Auto-generated. This file is compiled by `npm run compile-ba`. Do not edit manually.

0.2 Abstract

1.0 Introduction

1.1. The Auditory Dual-Stream Framework

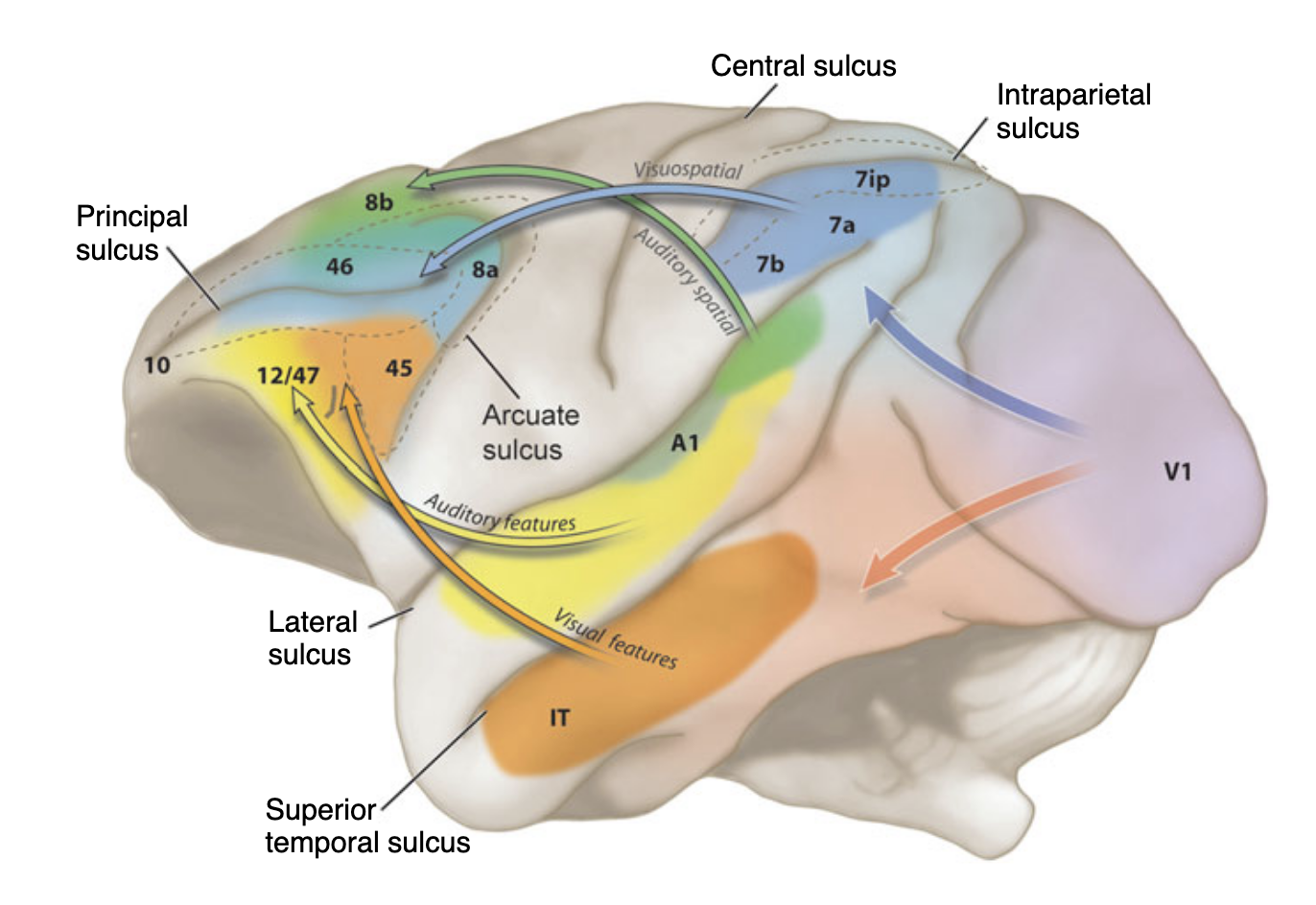

The prefrontal cortex is not only central to decision making but also serves as the main hub for top-down attention. When we decide to look at a squirrel sitting on the table, it is our prefrontal cortex that first makes the decision and then directs the eyes, and with them the visual system, toward the squirrel. While we focus on the squirrel, we hear it making noises and then attend to the auditory stimuli as well. Which parts of the prefrontal cortex direct attention to the auditory stream remains an open question. This thesis examines the extent to which the prefrontal cortex directs top-down attention across different modalities. First, however, we review the existing literature on the visual two-streams hypothesis.

1.1.1 The Visual Two-Streams Hypothesis

Lesion studies in macaque monkeys by Ungerleider and Mishkin (1982) initiated the concept of parallel processing streams in the visual system. They described two distinct pathways that both originate in the primary visual cortex (V1). The first, the ventral stream, projects toward inferotemporal cortex and processes objects such as a squirrel or the table. This stream is also called the ‘what’-stream and decodes object identity. The second, the dorsal stream, projects toward posterior parietal cortex and processes motion and object locations; it is also called the ‘where’-stream. Goodale & Milner (1992) later refined this framework, proposing that the dorsal stream is primarily involved in guiding how to interact with objects, while the ventral stream serves object perception and recognition. For our study it is relevant to ask whether the ‘how’/‘where’-stream exerts not only visuomotor but also audiomotor control over downstream modalities.

Critical for our study is recent work by Bedini and Baldauf (2021), which identified prefrontal hubs that exert top-down control over each stream. Based on evidence from functional and structural connectivity, they demonstrated a clear dissociation in functional connectivity: the Frontal Eye Field (FEF), a core node of the Dorsal Attention Network (DAN), shows predominant coupling with regions of the dorsal visual ‘where’-stream, while the anterior Inferior Frontal Junction (IFJa), part of the Frontoparietal Network (FPN), couples preferentially with the ventral visual ‘what’-stream. This connectivity-based division of labour was later supported by resting-state MEG data showing the same dissociation in oscillatory coupling and top-down directionality (Soyuhos & Baldauf (2023)). Together, these findings draw a clear picture of functional specialisation in prefrontal top-down control.

1.1.2 From Wernicke’s Area to Auditory Dual-Stream Processing

In the auditory modality, the processing of input was long thought to take place in a single cortical region, Wernicke’s area. It is located in the posterior left superior temporal gyrus (STG) and was considered the primary hub for auditory comprehension following Wernicke’s (1874) findings in aphasia, the loss of the ability to understand language. This model dominated neuroscience well into the twentieth century.

In the 1970s and 1980s, neuropsychological evidence revealed that lesions to the left STG did not consistently produce comprehension deficits but were instead more reliably associated with speech production deficits (Hickok & Poeppel 2007 - Nature). These findings showed that auditory processing could not be reduced to a single region and led to a fundamental re-evaluation of cortical auditory organisation.

The current view, developed most influentially by Hickok & Poeppel (2004, 2007) and Rauschecker & Scott (2009), organises the auditory cortex into two parallel processing streams analogous to those in the visual system. A posterodorsal stream projects from the superior temporal plane through parietal and premotor cortex and is associated with spatial processing and sensorimotor integration. An anteroventral stream projects from the STG forward through the temporal lobe toward inferior frontal regions and supports auditory object identification and semantic (speech) processing. Figure 1 illustrates this dual-stream architecture as proposed by Hickok & Poeppel (2007), mapping the two pathways onto a lateral view, mainly of the left hemisphere. Section 3.2 ‘Selection of Regions of Interest’ describes in detail which regions most likely belong to each stream based on the existing literature.

-Figure-1-dorsal-ventral-auditory-system-without-description.png)

Figure 1. Schema of the auditory dual-stream model (adapted from Hickok & Poeppel, 2007). The dorsal pathway (blue) extends from the superior temporal plane toward posterior parietal and premotor cortex. The ventral pathway (purple) projects anteriorly through the temporal lobe toward inferior frontal regions. Both streams originate in the primary auditory cortex on the supratemporal plane.

1.2 The Gap: Top-Down Control of Auditory Streams

In environments with competing sounds, the brain cannot attend to all auditory input simultaneously. Top-down attention functions as a control mechanism that filters relevant information and suppresses distractors, thereby focussing on a few inputs in a goal-directed manner (De Vries & Baldauf (2021) - Journal of Neuroscience). The key question is: which prefrontal regions act as the top-down controllers of these auditory streams?

The division into dorsal and ventral pathways is well documented (Ahveninen et al. (2006) - PNAS, Hickok & Poeppel 2007 - Nature), which sets the stage for the question of top-down attention mechanisms. Existing work has identified IFG subregions as frontal nodes in auditory processing, particularly BA44 and BA45 within the semantic pathway (Rolls et al. (2023) - Cerebral Cortex) and BA44 also along the dorsal pathway for affective prosody (Frühholz (2015) - NeuroImage). Hickok & Poeppel (2007 - Nature) described a dorsal pathway including the Spt, a region at the parietotemporal boundary within the Sylvian fissure, as a sensorimotor interface connecting anteriorly to Broca’s region and premotor cortex. However, these findings remain specific to language-related dorsal prosody processing or the semantic ‘what’-stream. The auditory ‘where’-stream in particular lacks a clear prefrontal controller. Whether the FEF and IFJa also direct the auditory domain in a similar fashion to the visual system remains unanswered.

1.3 Hypothesis: A Supramodal Organisation

Following the reasoning in Sections 1.1 and 1.2, we hypothesise that resting-state functional connectivity will show a clear dissociation of the auditory ‘what’- and ‘where’-streams: the auditory ‘where’-stream preferentially connects to the Frontal Eye Field (FEF), and the auditory ‘what’-stream to the anterior Inferior Frontal Junction (IFJa).

If confirmed, this dissociation would provide evidence for a supramodal organisational principle of the prefrontal cortex, with FEF and IFJa acting as domain-general hubs for top-down attentional control across sensory modalities (Spagna et al. (2015)). This study tests a first step toward that larger claim by examining whether FEF and IFJa connectivity patterns in the auditory domain match those established for vision.
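
The predicted dissociation can be read as a seed-by-stream interaction. The following is a minimal sketch of such a contrast in Python, not the analysis used in this thesis: the function name `dissociation_contrast`, the use of mean connectivity per seed and stream, and all numbers are illustrative assumptions.

```python
def dissociation_contrast(conn):
    """Interaction contrast for a 2x2 seed-by-stream connectivity table.

    conn[seed][stream] holds a mean connectivity value per seed and stream
    (assumed here to be on a comparable scale, e.g. Fisher-z values).
    Positive results mean FEF favours 'where' targets and IFJa favours
    'what' targets, which is the pattern the hypothesis predicts.
    """
    fef_preference = conn["FEF"]["where"] - conn["FEF"]["what"]
    ifja_preference = conn["IFJa"]["what"] - conn["IFJa"]["where"]
    return fef_preference + ifja_preference


# Hypothetical values for illustration only; these are not results.
example = {
    "FEF": {"where": 0.30, "what": 0.10},
    "IFJa": {"where": 0.05, "what": 0.25},
}
contrast = dissociation_contrast(example)

# Swapping the streams reverses the sign of the contrast.
reversed_example = {
    "FEF": {"where": 0.10, "what": 0.30},
    "IFJa": {"where": 0.25, "what": 0.05},
}
reversed_contrast = dissociation_contrast(reversed_example)
```

A contrast near zero would indicate that both seeds connect to the two streams in the same way, that is, no dissociation.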

2.0 Theoretical Background

The auditory dual-stream framework proposes that sound processing diverges into two functionally and anatomically distinct pathways after being processed by the primary auditory cortex.

The ventral ‘what’-stream decodes object identity and the dorsal ‘where’-stream processes spatial localisation and sensorimotor integration. This organisation mirrors the well-established visual dual-stream architecture. Romanski (2004) demonstrated in non-human primates that this division is not modality-specific, but ‘domain’-specific: the dorsolateral prefrontal cortex (dlPFC), including areas 46, 8a and 8b, receives converging spatial input from both posterior parietal (visual) and caudal superior temporal (auditory) cortices, while the ventrolateral PFC (vlPFC), including areas 45 and 12, receives object-related input from inferotemporal and anterior auditory association cortices (Figure 1, Romanski 2004; Romanski et al. (1999) - Nature Neuroscience, originally based on Arnsten (2003)). This domain-specific principle provides the theoretical foundation for the present thesis. The mapping of these macaque pathways onto human prefrontal architecture, specifically the FEF for the dorsal stream and the IFJa for the ventral stream, is elaborated in Sections 2.1 and 2.2, drawing on neuroimaging evidence (Bedini & Baldauf (2021)).

2.1 The Auditory What (“Ventral”) Stream

The auditory ‘what’-stream represents the more conceptually stable part of the dual-stream architecture, mostly tasked with transformation from auditory signals to semantic representations. The ‘what’-stream’s role in mapping sounds to meaning remains widely accepted as fundamental. This chapter discusses existing literature about the auditory ventral ‘what’-stream.

2.1.1 Grouping by Rolls (2022)

Throughout this thesis, I refer to the groups proposed by Rolls et al. (2022), who identified three large-scale networks using effective connectome analysis. These groups provide an anatomical reference for the regions discussed throughout the thesis. Group 1 consists of inferior STS regions (STSva, STSvp), anterior inferior temporal cortex (TE1a), temporal pole (TGd) and parietal PGi, aligning closely with the ventral stream described here. This group has effective connectivity (EC) directed toward Broca’s areas (44, 45, 47s), receives input from high-level areas, has EC with memory-related regions and with the frontal pole, and has EC directed to parts of Group 2. Group 2 comprises a large frontal system with regions 44, 45, 47l, SFL and 55b, and also includes the inferior temporal region TGv. This group has robust EC with superior regions (STSda, STSdp, STGa) and with inferior regions such as STSvp and the Peri-Sylvian Language Area (PSL). Finally, Group 3 is centred on the superior temporal lobe and the temporoparietal junction, with areas A5, STGa, STSda, STSdp, PSL, STV and TPOJ1. These regions show EC with some visual and auditory areas (Belt regions, A1, A4, A5) and with the frontal operculum, and have strong EC directed toward 44 and 45.

2.1.2 Functional Definition

The primary objective of the ‘what’-stream is auditory object identification: isolating what is being heard (e.g. specific words, a dog’s bark or a piano) from a chaotic acoustic environment.

It operates as a sound-to-meaning mapping that starts with feature extraction in the primary auditory cortex, which responds well to pure tones (Rauschecker & Afsahi (2023) - Journal of Comparative Neurology). Top-down attention in the ‘what’-pathway is mediated by the IFJ, mainly its anterior part. The IFJ is located at the junction of the inferior precentral sulcus (iPCS) and the inferior frontal sulcus (IFS), corresponding to BA6, BA8 and BA44 (Bedini & Baldauf (2021)). The IFJ is subdivided into an anterior and a posterior part:

- anterior Inferior Frontal Junction (IFJa): Unlike the FEF, the IFJa processes non-spatial, object-based information. It is part of the Frontoparietal Network (FPN) and functions as a mediator of top-down, feature-based attention, particularly in language-related networks (Ji et al., 2019, as cited in Bedini & Baldauf, 2021). It is also involved in working memory and may encode information at a higher level of abstraction than the FEF. It can modulate DAN activity according to current task demands and toggle between DAN and Ventral Attention Network (VAN) activity. Critically, IFJa receives EC from Groups 2 and 3 (Rolls et al., 2022), but not from Group 1, suggesting that IFJa is preferentially accessed by frontal language output regions and superior temporal auditory areas, but not by the inferior STS network.

- posterior Inferior Frontal Junction (IFJp - Control Seed): While the majority of neuroimaging literature treats the IFJ as a monolithic unit, recent structural and functional evidence suggests a clear dissociation between its anterior (IFJa) and posterior (IFJp) subdivisions (Bedini (2023) - Brain Structure). IFJp acts as a core node of the multiple-demand system, activated by generalised executive tasks (e.g., reasoning, math). We included IFJp specifically as a control region to validate the target specificity of the IFJa towards the auditory what-pathway.

2.1.3 Anatomical Trajectory

The anteroventral auditory ‘what’-stream originates in Heschl’s gyrus, extends through the anterior superior temporal gyrus (STGa) and along the superior temporal sulcus (STS) and the middle and inferior temporal gyri, and reaches the anterior inferior frontal gyrus (IFG; 44, 45, 47l) (Ahveninen et al., 2006; Frühholz, 2015). Additionally, Soyuhos & Baldauf (2023) demonstrated that the anterior IFJ has strong power coupling with the temporal cortex in delta and gamma frequencies.

2.1.4 Differences in Lateralisation

The auditory ‘what’-stream shows a left-hemispheric dominance for language-related processing. Rolls (2022) - NeuroImage reports stronger left-hemispheric EC across the what-stream regions STS, temporal pole, PGi and inferior frontal regions 44, 45 and 47l. The right hemisphere, however, still plays a complementary role in emotional and prosodic aspects of speech, activating right STG and IFG (Frühholz (2015) - NeuroImage). This suggests a division of labour in which the left hemisphere dominates semantic processing and the right contributes to the affective dimension of auditory objects.

2.2 The Auditory Where (“Dorsal”) Stream

In contrast to the ventral ‘what’-stream, the dorsal pathway has no single, universally accepted functional definition. Depending on the theoretical framework, the dorsal stream has been characterised as a spatial localisation system (Rauschecker & Scott (2009)), a sensorimotor integration pathway for speech (Hickok & Poeppel (2007)), or a system for processing affective prosody (Frühholz (2015)). These accounts are not mutually exclusive — they reflect different levels of description of the same anatomical pathway. For the purposes of this thesis, the dorsal stream is treated as a spatial-motor system subserving both spatial orienting and audiomotor integration, with the FEF as its prefrontal top-down control hub.

2.2.1 Spatial Processing and Audio-Motor Integration

The spatial-motor characterisation of the dorsal stream rests on converging evidence across species and methodologies. Early support came from non-human primate studies demonstrating posterior auditory cortex sensitivity to sound location (Rauschecker & Afsahi (2023)), complemented by neuroimaging showing right-lateralized temporo-parietal activation for spatial processing (Griffiths et al. (1998)) and the clinical observation that spatial neglect is more severe after right-hemisphere damage.

Ahveninen et al. (2006) extended this account by reporting a double dissociation between anterior ‘what’ and posterior ‘where’ processing, with dorsal stream responses emerging at 70–150ms post-stimulus onset. Rauschecker (2011) further noted a cross-species shift: whereas non-human primates recruit the dorsal stream primarily for spatial localisation, humans appear to repurpose it for sensorimotor integration in speech.

This sensorimotor dimension was elaborated by Hickok and Poeppel (2004, 2007), who proposed that the dorsal stream maps acoustic speech signals onto articulatory representations, projecting from the STG via the Sylvian parietal temporal area (Spt) to frontal regions including Broca’s area (pars opercularis, Area 44, and pars triangularis, Area 45). Rather than contradicting the spatial account, this suggests that the dorsal pathway supports a broader spatial-motor function — localizing sounds in space and coupling that information with motor action plans.

2.2.2 Anatomical Definition and Evidence

The auditory dorsal “where”-stream originates posterior to Heschl’s gyrus in the planum temporale and STG (Ahveninen et al. (2006)), and extends through auditory association areas before reaching parietal and frontal regions. A4 and A5 have EC to motion-sensitive areas MT and MST, which connect to superior parietal regions (7AL, 7Am, 7PC), consistent with a dorsal “where”-stream (Rolls et al. (2023)).

The Frontal Eye Field (FEF), located in the caudal middle frontal gyrus ventral to the junction of the superior precentral sulcus (sPCS) and superior frontal sulcus (SFS), serves as a primary prefrontal node of the dorsal stream. The FEF contains a full topographic map of contralateral space, predominantly encodes spatial information (Wang et al., 2015, as cited in Bedini (2023)) and is a core node of the Dorsal Attention Network (DAN), mediating covert spatial attention, oculomotor control, and spatial working memory (Bedini & Baldauf (2021)). It is structurally connected via SLF1 and SLF2 to posterior parietal (LIPd) and temporoparietal cortices, embedding the FEF anatomically in the “where”-stream.

The inferior parietal cortex (specifically PF, PFop and PFcm) corresponds to the gyrus of the classical IPL (Baker (2018)) and forms a critical relay station within the “where”-stream. Consistent with both Rauschecker & Scott (2009) and Hickok & Poeppel (2004, 2007), the “where”-pathway projects from posterior superior temporal cortex (pST) through parietal cortex before reaching prefrontal regions. These inferior parietal areas function as higher-level coordinators between auditory and somatosensory areas such as OP1-4 and FOP1 (Glasser et al. (2016)). Additionally, posterior parietal areas may serve as a relay station to the premotor cortex (PMC).

Importantly, the IPL does not appear to be driven by acoustic stimuli (Rauschecker & Scott (2009)); rather, the angular gyrus is involved in higher-order speech processing, along with clear prefrontal activation. This suggests that the inferior parietal cortex is involved in domain-general, language-related speech comprehension rather than low-level auditory processing (Rauschecker & Scott (2009)).

Finally, superior parietal regions 7Am, 7PC and 7AL receive direct auditory input from A4 and PBelt and may support tracking moving objects in space (Rolls et al. (2023)).

2.2.3 FEF’s Role in Auditory Spatial Attention

Salmi (2009) demonstrated FEF and PMC activation during both top-down controlled and bottom-up triggered shifting of auditory spatial attention. In that study, participants had to selectively attend to one of two simultaneous tone streams presented at the left or right, occasionally shifting attention either in response to a visual cue (top-down, cue-guided attention shifts, CAS) or to loudness-deviating tones in the ignored stream (bottom-up, LDTs). Top-down controlled shifts activated bilateral FEF/PMC alongside SPL, IPS, TPJ, and IFG/MFG. Bottom-up triggered shifts activated an overlapping network of vmSPL, left IFG/MFG, left FEF/PMC, and right TPJ (Salmi, 2009), showing that the FEF is recruited for auditory orienting tasks.

At the behavioural level, deviants in the to-be-ignored stream lengthened response times, while deviants in the to-be-attended stream shortened them. This indicates that auditory bottom-up attention is modulated by the current focus of spatial attention. Interestingly, the same group had previously shown that shifting spatial attention in audition and vision recruits overlapping IPS/SPL and FEF/PMC regions (Salmi (2007)).

These findings suggest that FEF not only acts as a modality-specific oculomotor controller but might also follow a modality-independent principle, which the present thesis examines directly.

2.2.4 The “Where”-Stream as a Spatial-Motor System

Taken together, the evidence reviewed in 2.2.1, 2.2.2 and 2.2.3 suggests that the auditory “where”-stream does not map onto a single functional label. Rather, it has both a spatial-processing function (Rauschecker & Scott (2009)) and a sensorimotor-integration function (Hickok & Poeppel (2007)), which reflect different levels within the same dorsal pathway.

Spatial processing in the temporo-parietal cortex is predominantly right-lateralized, consistent with the well-documented asymmetry of spatial neglect in patients with right-hemispheric damage (Rauschecker & Scott (2009)). In contrast, Hickok & Poeppel’s dorsal language model that links area Spt to Broca’s area is strongly left-dominant (Hickok & Poeppel (2007)). This hemispheric dissociation suggests that the “where”-stream operates in parallel in both hemispheres with different functional emphases: right-lateralized spatial and motion processing, and left-lateralized sensorimotor integration.

Further findings support this account, with caudal auditory belt area receiving somatosensory input (Rauschecker & Scott (2009)) and the inferior parietal cortex - especially PF, PFcm, PFop - serving as an interface between auditory, somatosensory and motor signals.

A related question concerns the dorsal language pathway. Rolls et al. (2023) report that connections of PBelt, A4 and A5 with BA44 may form a language-related dorsal pathway. However, this is not in conflict with a spatial-motor property: the same stream seems to perform spatial processing and sensorimotor feedback in the right hemisphere and articulatory and phonological processing in the left.

For the purpose of this thesis, the “where”-stream is therefore treated as a spatial-motor system: primarily organised around spatial and motor processing, with a left-hemispheric component that extends into speech production. The FEF serves as a primary prefrontal node, embedded anatomically in the DAN and structurally connected to the posterior parietal regions.

3.0 Methods

3.1 Data Acquisition & Preprocessing

Dataset and Participants. This thesis used resting-state functional magnetic resonance imaging (rs-fMRI) data from the S1200 release of the Human Connectome Project (HCP; WU-Minn HCP Consortium, 2018). To ensure high data quality and completeness, a strictly defined sub-group of healthy young adult subjects was selected. This cohort was chosen because these subjects completed all four rs-fMRI runs and their data were reconstructed using the latest r227 reconstruction algorithm, which corrects for specific phase-encoding artefacts present in earlier releases.

Data Acquisition. Imaging data were acquired using a customized Siemens 3T Connectome Skyra scanner. The rs-fMRI data were collected using a gradient-echo EPI sequence with the following standard HCP parameters: a repetition time (TR) of 720 ms, an echo time (TE) of 33.1 ms, a flip angle of 52°, and an isotropic spatial resolution of 2 mm. Each subject completed two sessions on separate days. Each session consisted of two 15-minute runs with opposing phase-encoding directions (Right-to-Left and Left-to-Right), resulting in 1200 frames per run and a total of 4800 time points per subject. During the acquisition, subjects were instructed to keep their eyes open and maintain fixation on a red crosshair on a dark background.
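
As a quick consistency check on the figures above, the arithmetic can be written out. This is only a sketch; the TR of 0.72 s is the standard HCP rs-fMRI value and is treated here as an assumption, and the variable names are illustrative.

```python
SESSIONS = 2            # two sessions on separate days (from the text)
RUNS_PER_SESSION = 2    # one run per phase-encoding direction (from the text)
FRAMES_PER_RUN = 1200   # frames per run (from the text)
TR_SECONDS = 0.72       # assumption: standard HCP rs-fMRI repetition time

# Total number of time points per subject across all four runs.
total_frames = SESSIONS * RUNS_PER_SESSION * FRAMES_PER_RUN

# Duration of a single run in minutes, given the assumed TR.
run_minutes = FRAMES_PER_RUN * TR_SECONDS / 60
```

Under this assumption, each run lasts 14.4 minutes, which matches the nominal 15-minute runs, and the four runs add up to the 4800 time points stated above.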

Preprocessing. The raw fMRI data were preprocessed with the standardized HCP Minimal Preprocessing Pipelines. The first pipeline performs spatial distortion correction, motion correction, registration to the structural image, bias-field reduction, intensity normalisation, and masking with the final brain mask. Subsequently, the data were transformed from the native mesh to the fs_LR-registered 32k mesh (2 mm average vertex spacing).

To account for noise and motion artefacts, the data were denoised using ICA-FIX (FMRIB’s ICA-based X-noiseifier). This automated method decomposes the data into independent components and regresses out those identified as structured noise (e.g., cardiac, respiratory, and head movement artefacts) without applying aggressive global signal regression.
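
The core idea of regressing noise components out of the data can be illustrated with ordinary least squares. The sketch below is a deliberate simplification on synthetic data and is not the FIX implementation: it removes all variance explained by the noise time courses, and the function name `regress_out` and the simulated signals are assumptions made for illustration.

```python
import numpy as np


def regress_out(data, noise):
    """Remove noise time courses from data via ordinary least squares.

    data  : (timepoints, voxels) array
    noise : (timepoints, components) array of noise time courses
    Returns the demeaned data with the fitted noise contribution removed.
    """
    data = data - data.mean(axis=0)
    noise = noise - noise.mean(axis=0)
    # Least-squares weights of each noise time course for every voxel.
    beta, *_ = np.linalg.lstsq(noise, data, rcond=None)
    return data - noise @ beta


# Synthetic example: three "voxels" contaminated by one shared artefact.
rng = np.random.default_rng(0)
signal = rng.standard_normal((200, 3))
artefact = rng.standard_normal((200, 1))
contaminated = signal + artefact @ np.array([[2.0, -1.0, 0.5]])
cleaned = regress_out(contaminated, artefact)
```

After this step the cleaned time series are uncorrelated with the artefact time course, which is the property the regression is meant to guarantee.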

3.2 Selection of Regions of Interest (ROIs)

To test the hypothesis of supramodal prefrontal control over auditory processing, a comprehensive set of 36 target ROIs and 2 primary seed regions (plus one control seed) per hemisphere was defined. All regions were identified utilizing the multimodal cortical parcellation (HCP-MMP1.0) by Glasser et al. (2016) - Nature. This atlas provides superior neuroanatomical precision by integrating structural, functional, and connectivity data.
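
Working with a parcellation means reducing vertex-wise data to one time series per region. A minimal sketch of that step is shown below, assuming a label vector that assigns each vertex to a parcel; the function name `parcellate`, the convention that label 0 means unlabelled, and the toy data are illustrative assumptions rather than the pipeline used here.

```python
import numpy as np


def parcellate(timeseries, labels):
    """Average vertex time series within each parcel.

    timeseries : (timepoints, vertices) array
    labels     : (vertices,) integer parcel label per vertex; 0 = unlabelled
    Returns (parcel_ids, parcel_ts), where parcel_ts has shape
    (timepoints, n_parcels) and columns follow the order of parcel_ids.
    """
    parcel_ids = np.unique(labels[labels > 0])
    parcel_ts = np.column_stack(
        [timeseries[:, labels == pid].mean(axis=1) for pid in parcel_ids]
    )
    return parcel_ids, parcel_ts


# Toy data: 2 time points, 6 vertices, two parcels and one unlabelled vertex.
ts = np.arange(12, dtype=float).reshape(2, 6)
labels = np.array([1, 1, 2, 2, 2, 0])
ids, parcel_ts = parcellate(ts, labels)
```

In this toy case the first parcel averages vertices 0 and 1 and the second averages vertices 2 to 4, while the unlabelled vertex is ignored.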

The selected ROIs are categorized into functional networks reflecting the auditory dual-stream architecture and their respective prefrontal controllers. The large sample size (N=812) provides sufficient statistical power to detect moderate connectivity effects across all ROI pairs after multiple comparison correction. Core acoustic regions (A1, MBelt, LBelt, area 52) were intentionally excluded from the ROI set to prevent multicollinearity in the partial correlation matrix and to focus the analysis on the hierarchical level at which the divergence into ‘what’ and ‘where’ streams first occurs (PBelt, A4, A5). In general, ROI selection relies on the previous literature established in Section 2.
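
Since the paragraph above refers to a partial correlation matrix, a minimal sketch of one common way to compute it may help: via the inverse covariance (precision) matrix. The function name `partial_correlation`, the use of a pseudo-inverse, and the simulated chain of three regions are assumptions for illustration and do not describe the exact estimator used in this thesis.

```python
import numpy as np


def partial_correlation(ts):
    """Partial correlation matrix from (timepoints, regions) time series.

    Each off-diagonal entry is the correlation between two regions after
    removing the linear influence of all other regions. It is computed
    from the inverse covariance (precision) matrix.
    """
    precision = np.linalg.pinv(np.cov(ts, rowvar=False))
    d = np.sqrt(np.diag(precision))
    pcorr = -precision / np.outer(d, d)
    np.fill_diagonal(pcorr, 1.0)
    return pcorr


# Simulated chain a -> b -> c: a and c are related only through b.
rng = np.random.default_rng(1)
a = rng.standard_normal(5000)
b = a + 0.5 * rng.standard_normal(5000)
c = b + 0.5 * rng.standard_normal(5000)
pcorr = partial_correlation(np.column_stack([a, b, c]))
```

In this simulation the partial correlation between a and c is close to zero even though their ordinary correlation is high. The sketch also shows why strongly collinear regions are problematic: they make the covariance matrix nearly singular, so its inverse becomes unstable.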

3.2.1 The HCP-MMP1.0 Atlas

Before defining specific regions, the choice of the underlying atlas must be justified. Unlike traditional parcellations based solely on cytoarchitecture (e.g., Brodmann areas), the HCP-MMP1.0 atlas integrates four distinct modalities:

1. Cortical Myelin Content: Identified via T1w/T2w ratios.
2. Cortical Thickness: Measuring structural differences.
3. Task-fMRI Activation: Pinpointing functional hubs.
4. Resting-State Functional Connectivity: Mapping intrinsic networks.

This multimodal approach is essential for this study because it allows for the differentiation of functionally distinct areas that appear homogenous in classical maps. For instance, it enables the isolation of the IFJa from the surrounding prefrontal cortex, which is important for the auditory dual-stream architecture.

3.2.2 Prefrontal Seed Regions (The Conductors)

We selected distinct prefrontal control hubs based on the functional dissociation previously established in the visual system by Bedini & Baldauf (2021). This selection tests whether these visual control architectures map onto auditory processing.

FEF (Frontal Eye Field). A core node of the Dorsal Attention Network (DAN), hypothesised to exert top-down control over the spatial auditory “Where”-stream.

IFJa (Anterior Inferior Frontal Junction). Part of the Frontoparietal Network (FPN), hypothesised to mediate feature-based attention and semantic processing within the auditory “What”-stream (Bedini & Baldauf (2021)).

IFJp (Posterior Inferior Frontal Junction), Control Seed. Included strictly as a methodological baseline. IFJp is associated with the Multiple-Demand (MD) system for general-purpose executive tasks (Bedini & Baldauf (2021)). Factoring out its variance allows for a double dissociation, ensuring that the observed control over the auditory network is highly specific to the IFJa.

3.2.3 The Dorsal Pathways (Where & How)

The dorsal pathways process spatial localisation (Where) and sensorimotor integration (How) (Hickok & Poeppel 2007 - Nature).

3.2.3.1 The Spatial-Orienting Network (Where-Stream)

This network tracks moving auditory objects and directs spatial focus.

7AL, 7Am, 7PC. Superior parietal areas receiving direct input from early auditory regions, crucial for auditory spatial attention and motion tracking (Rolls et al. (2023) - Cerebral Cortex).

PF, PFop. These areas correspond to the IPL and play an important role in the dorsal auditory pathway (Rauschecker & Scott (2009) - Nature Neuroscience).

PFcm. Defined by Glasser et al. (2016) - Nature as a heavily myelinated inferior parietal region with strong somatosensory properties along with OP1-4 and FOP1. Although recent evidence indicates a lack of direct auditory responsiveness (Dureux et al., 2024), it was strategically included due to its anatomical position bridging the auditory-motor interface (OP4) and multimodal integration hubs (PSL).

MT, MST. Classical supramodal motion hubs. They receive effective connectivity from auditory areas (A4/A5) to enable the tracking of auditory movement in space (Rolls et al. (2023) - Cerebral Cortex).

3.2.3.2 The Motor Interface (How-Stream)

This interface translates auditory representations into articulatory motor plans (Sound-to-Motor mapping, Hickok & Poeppel (2004) - Cognition, Hickok & Poeppel 2007 - Nature).

55b. A premotor hub that responds to vocal stimuli and has been assigned to the language network. It is of particular interest because 55b is a neighbour of the prefrontal seed region FEF (Glasser et al. (2016) - Nature; Dureux (2024)).

44 (Broca’s pars opercularis). According to Rolls et al. (2023) - Cerebral Cortex, Area 44 might form a dorsal auditory pathway with PBelt, A4, A5 and motion areas MT and MST.

OP4, FOP1, FOP2, FOP3, 43. According to Frühholz (2015) - NeuroImage, the frontal operculum is connected to posterior STG and anterior IFG via dorsal pathways. OP4 specifically demonstrates exclusive sensitivity to human vocalisations (Dureux (2024)). Area 43 was included due to its anatomical position between the FOP and premotor cortex, potentially serving as an interface along the auditory-motor pathway.

SCEF (Supplementary and Cingulate Eye Field). A medial frontal cingulate area anatomically adjacent to the FEF. Despite responding almost exclusively to vocalisations (Dureux (2024)), its medial frontal anatomy and oculomotor system affiliation place it within the dorsal network. Dureux (2024) further groups SCEF functionally with premotor area 55b, OP4, and Broca’s areas 44 and 45 in the same vocalisation-selective cluster.

3.2.4 The Ventral Pathways (What)

The ventral stream decodes auditory object identity, semantics, and speech perception.

3.2.4.1 Ventral Semantic and Identity Network

STGa, STSda, STSdp, STSva, STSvp, TA2. The semantic core of the ventral pathway processing complex auditory objects. The STS complex integrates vocal inputs with facial motor representations to decode identity and message (Glasser et al. (2016) - Nature, Rolls (2022) - NeuroImage, Rolls et al. (2023) - Cerebral Cortex).

AVI. The Anterior Ventral Insula shows activations to auditory stimuli alongside inferior frontal regions 55b, IFJp, IFJa, IFSp, 44, 45, and OP4. AVI exhibits highly specific capabilities in distinguishing vocalisations from noise, extending the semantic network into insular evaluation regions (Dureux (2024)).

45, 47l, IFSp. The frontal termini of the ventral meaning pathway. Area 45 (Broca’s pars triangularis) and 47l handle semantic selection, while IFSp shows responses to vocalisation along with 44, 45, IFJp, OP4 and 55b (Rolls et al. (2023) - Cerebral Cortex; Dureux (2024)).

3.2.4.2 Anterior Temporal Semantic Regions

TE1a, TGd, TGv (Temporal Pole). Following the effective connectivity analysis of Rolls (2022) - NeuroImage, these areas represent the semantic integration hubs of the temporal lobe. We intentionally differentiated between these regions to test the specificity of prefrontal control. TE1a & TGd (Group 1) are associated with an inferior, visual-semantic system; their lack of effective connectivity with the IFJa makes them ideal candidates to evaluate the boundaries of the IFJa-controlled auditory network. TGv (Group 2) is integrated into a frontal system involving speech production and syntax; its robust connectivity with the IFJa, FEF, and Area 55b makes it an interesting target for top-down modulation during linguistic and executive tasks.

3.2.5 Hierarchical Gateways and Connectors

These regions serve as routing hubs and convergence zones between early acoustic analysis and higher-order integration.

PBelt, A4, A5. The primary “routers” exiting the auditory core. PBelt and A4 show effective connectivity to parietal regions 7AL, 7Am and 7PC. Rolls et al. (2023) - Cerebral Cortex suggest that PBelt, A4 and A5 might form a language-related dorsal pathway, which would assign them to the auditory where-stream. The Glasser et al. (2016) - Nature parcellation, on the other hand, places A4 and A5 together with STSdp, STSda, STSvp, STSva, STGa, and TA2 in a region termed auditory association cortex. This region belongs rather to the ventral what-stream, making a clear classification difficult. These ambiguities are resolved and discussed in chapters 4.4 and 5.4.1.

PGi. Effective connectivity to semantic areas such as STS, TGv, TGd and TE1a places PGi in the auditory what-pathway. Rolls (2022) - NeuroImage assigns PGi to Group 1 along with STSva, STSvp, TE1a and TGd.

PSL, STV, TPOJ1. Multimodal convergence zones (Group 3 networks) that bridge auditory semantics with visual and somatosensory inputs and show effective connectivity with PBelt, A4 and A5 (Rolls (2022) - NeuroImage; Rolls et al. (2023) - Cerebral Cortex). While PSL is anatomically integrated into the semantic network, functional models suggest it acts as an abstract linguistic interface rather than a primary auditory responder (Dureux (2024)). We therefore placed these regions in the semantic ‘what’-pathway for the following analyses. TPOJ1 shows weak responses to non-vocal sounds according to Dureux (2024).

3.2.6 Tables

Table 1: Prefrontal Seed Regions

| Abbreviation | Full Name | Location (Stream) | Source |
|---|---|---|---|
| FEF | Frontal Eye Field | Prefrontal (Dorsal Attention) | Bedini & Baldauf (2021); Salmi et al. (2009) |
| IFJa | Anterior Inferior Frontal Junction | Prefrontal (Frontoparietal) | Bedini & Baldauf (2021) |
| IFJp | Posterior Inferior Frontal Junction | Prefrontal (Multiple-Demand) | Bedini & Baldauf (2021) |

Table 2: Where & How Stream (Dorsal)

| Abbreviation | Full Name | Location (Stream) | Source |
|---|---|---|---|
| 7AL | Lateral Area 7A | ‘Where’ (Dorsal) | Rolls et al. (2023) |
| 7Am | Medial Area 7A | ‘Where’ (Dorsal) | Rolls et al. (2023) |
| 7PC | Area 7PC | ‘Where’ (Dorsal) | Rolls et al. (2023) |
| A4 | Auditory Area 4 | ‘Where’ (Dorsal) | Rolls et al. (2023) |
| PBelt | Parabelt Complex | ‘Where’ (Dorsal) | Rolls et al. (2023) |
| MT | Middle Temporal Area | ‘Where’ (Dorsal) | Rolls et al. (2023) |
| MST | Medial Superior Temporal Area | ‘Where’ (Dorsal) | Rolls et al. (2023) |
| PF | Area PF (Inferior Parietal) | ‘Where’ (Dorsal) | Baker (2018) |
| PFcm | Area PFcm | ‘Where’ (Dorsal) | Glasser (2016) |
| PFop | Area PF Opercular | ‘Where’ (Dorsal) | Rauschecker & Scott (2009) |
| 43 | Area 43 | ‘How’ (Dorsal) | Frühholz (2015) |
| 44 | Area 44 (Pars Opercularis) | ‘How’ (Dorsal) | Rolls et al. (2023) |
| 55b | Area 55b | ‘How’ (Dorsal) | Dureux (2024) |
| FOP1-3 | Frontal Operculum 1, 2, 3 | ‘How’ (Dorsal) | Frühholz (2015) |
| OP4 | Area OP4/PV (Parietal Operculum) | ‘How’ (Dorsal) | Dureux (2024) |
| SCEF | Supp. & Cingulate Eye Field | ‘How’ (Dorsal) | Dureux (2024) |

Table 3: What Stream (Ventral)

| Abbreviation | Full Name | Location (Stream) | Source |
|---|---|---|---|
| 45 | Area 45 (Pars Triangularis) | ‘What’ (Ventral) | Rolls et al. (2023) |
| 47l | Area 47 Lateral | ‘What’ (Ventral) | Rolls et al. (2023) |
| A5 | Auditory Area 5 | ‘What’ (Ventral) | Rolls et al. (2022), Glasser (2016) |
| AVI | Anterior Ventral Insula | ‘What’ (Ventral) | Dureux (2024) |
| IFSp | Inferior Frontal Sulcus Post. | ‘What’ (Ventral) | Dureux (2024) |
| PGi | Area PGi (Inferior Parietal) | ‘What’ (Ventral) | Rolls (2022) |
| PSL | Perisylvian Language Area | ‘What’ (Ventral) | Rolls (2023) |
| STGa | Superior Temporal Gyrus Ant. | ‘What’ (Ventral) | Glasser (2016) |
| STSda/dp | STS Dorsal Ant. / Post. | ‘What’ (Ventral) | Rolls et al. (2023) |
| STSva/vp | STS Ventral Ant. / Post. | ‘What’ (Ventral) | Glasser (2016) |
| STV | Superior Temporal Visual Area | ‘What’ (Ventral) | Rolls (2023) |
| TA2 | Area TA2 | ‘What’ (Ventral) | Glasser (2016) |
| TE1a | Area TE1 Anterior | ‘What’ (Ventral) | Rolls (2022) |
| TGd / TGv | Area TG Dorsal / Ventral (Temporal Pole) | ‘What’ (Ventral) | Rolls (2022) |
| TPOJ1 | Temp.-Par.-Occ. Junction 1 | ‘What’ (Ventral) | Rolls (2023) |

3.3 Matrix Construction

3.3.1 Functional Connectivity

Resting-state functional connectivity (RSFC) is defined as the temporal correlation between neurophysiological events in spatially distinct brain regions (Friston (1994)). In this study, RSFC is operationalized as the statistical dependency between BOLD (Blood Oxygen Level Dependent) signal time series, reflecting the intrinsic functional architecture of the brain at rest (Biswal (1995)). Unlike effective connectivity (EC), which models directed causal influences, RSFC is a symmetric, undirected measure that captures the degree to which two regions show correlated activity over time, independent of any explicit task or stimulus.

3.3.2 Time Series Extraction

We used the preprocessed dense CIFTI time series from the HCP S1200 release. For each subject, the BOLD signal was spatially averaged across all vertices within each of the 360 parcels (180 per hemisphere) of the HCP MMP1 atlas (Glasser et al. (2016) - Nature). Mean BOLD time series were extracted for all seed regions (FEF, IFJa, IFJp) [TODO: which additional seeds? 55b, 44, 45?], as well as for all auditory target regions defined in Section 3.2.
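The spatial averaging step can be illustrated with a minimal sketch. It assumes the vertex-wise time series and a parcel label per vertex are already loaded as plain Python lists; the function name `parcel_mean_timeseries`, the label convention (0 = unassigned) and the toy data are illustrative assumptions, not part of the toolbox used in this thesis.

```python
from collections import defaultdict


def parcel_mean_timeseries(vertex_ts, vertex_labels):
    """Average vertex-wise time series within each parcel.

    vertex_ts:     one time series (list of floats) per vertex, all of equal length
    vertex_labels: one parcel label per vertex; label 0 is treated as unassigned (assumption)
    Returns a dict mapping parcel label -> mean time series of that parcel.
    """
    members = defaultdict(list)
    for ts, label in zip(vertex_ts, vertex_labels):
        if label != 0:
            members[label].append(ts)
    # zip(*series) iterates over time points, yielding the values of all member vertices
    return {
        label: [sum(samples) / len(samples) for samples in zip(*series)]
        for label, series in members.items()
    }


# Toy data: four vertices, three time points, two parcels (hypothetical values)
vertex_ts = [[1.0, 2.0, 3.0], [3.0, 4.0, 5.0], [10.0, 10.0, 10.0], [0.0, 0.0, 0.0]]
vertex_labels = [1, 1, 2, 0]
parcel_ts = parcel_mean_timeseries(vertex_ts, vertex_labels)
```

With the real atlas, the same reduction would yield 360 mean time series per subject.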

3.3.3 Connectivity Matrix Construction

Pairwise RSFC values were computed as Pearson correlation coefficients between the extracted BOLD time series of each seed region and all parcels of the HCP-MMP1 atlas, consistent with the HCP’s own connectivity analyses (Glasser et al. (2016) - Nature). For each subject, this yielded one connectivity vector per seed, representing the seed’s full RSFC profile across the cortex. All matrix construction steps were implemented using a custom MATLAB toolbox developed within the Baldauf Research Group. [TODO: toolbox reference; ask Daniel]
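As a rough illustration of how such a connectivity vector arises, the following sketch computes the Pearson correlation between one seed and every other parcel. It is not the MATLAB toolbox mentioned above; the helper names `pearson` and `seed_profile` and the toy time series are assumptions made for this example.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length series (both must have non-zero variance)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def seed_profile(parcel_ts, seed):
    """Correlate the seed's time series with every other parcel's time series."""
    return {
        name: pearson(parcel_ts[seed], ts)
        for name, ts in parcel_ts.items()
        if name != seed
    }


# Toy parcel time series (hypothetical values, four time points)
parcel_ts = {
    "FEF": [1.0, 2.0, 3.0, 4.0],
    "PF": [2.0, 4.0, 6.0, 8.0],     # rises with the seed
    "STSdp": [4.0, 3.0, 2.0, 1.0],  # falls as the seed rises
}
profile = seed_profile(parcel_ts, "FEF")
```

Here `profile["PF"]` is close to +1 and `profile["STSdp"]` close to -1, because the toy series are exact linear functions of the seed.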

3.3.4 Single Seed vs. Contrast Analysis

We used two complementary analysis modes. In the single-seed analysis, the functional connectivity of a given seed region (FEF or IFJa) was assessed against the mean whole-brain connectivity of that subject, detecting parcels that are significantly more correlated with the seed region than the average cortical region. In the contrast analysis, the connectivity profiles of two seed regions were directly compared, revealing parcels with preferential connectivity to one seed over the other.

3.3.5 Partial vs Full Correlation

When characterizing functional brain networks, a critical distinction needs to be made between full and partial correlation. Full correlation calculates the pairwise statistical relationship between two regions A and B without controlling for other variables. As a consequence, any two regions that both correlate with a third region C will tend to appear correlated with each other, displaying indirect correlation without a direct connection. Partial correlation, on the other hand, estimates the relationship between A and B after regressing out the variance shared with all other simultaneously measured regions, such as region C (Marrelec (2006) - NeuroImage; Smith (2011)). As a result, the partial correlation connectome is considerably sparser than its full correlation counterpart, while retaining the most direct functional connections (Glasser et al. (2016) - Nature).

In this study, both measures are applied: full correlation provides a global view of each seed’s connectivity landscape, while partial correlation isolates direct connections after filtering out contributions from the other regions included in the analysis.
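The difference between the two measures is easiest to see in the three-region case, where the partial correlation between A and B given C has a closed form. The sketch below uses this textbook first-order formula. The thesis analysis partials out all other included regions at once, which this simplified example does not reproduce, and the function name and input values are illustrative assumptions.

```python
import math


def partial_corr_3(r_ab, r_ac, r_bc):
    """First-order partial correlation between A and B, controlling for C.

    r_ab, r_ac, r_bc are the full (Pearson) correlations between the three regions.
    """
    return (r_ab - r_ac * r_bc) / math.sqrt((1 - r_ac ** 2) * (1 - r_bc ** 2))


# Hypothetical case: A and B are linked only through C (r_ab equals r_ac * r_bc)
r_indirect = partial_corr_3(r_ab=0.48, r_ac=0.8, r_bc=0.6)

# Hypothetical case: C is unrelated to both, so nothing is removed
r_unchanged = partial_corr_3(r_ab=0.5, r_ac=0.0, r_bc=0.0)
```

In the first case the full correlation of 0.48 shrinks to roughly zero once C is accounted for. In the second case the partial correlation equals the full correlation.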

3.3.6 Statistical Testing: Wilcoxon Signed-Rank Test & FDR Correction

Statistical significance of RSFC estimates was assessed using the paired Wilcoxon signed-rank test, a non-parametric alternative to the Gaussian-based paired t-test. This test was chosen because RSFC values derived from Pearson correlations do not necessarily follow a Gaussian distribution across subjects (Soyuhos & Baldauf (2023)). For the single-seed analyses, a one-tailed test was applied, evaluating whether connectivity with the seed region exceeded the mean whole-brain connectivity. For contrast analyses, a two-tailed test was used to determine whether connectivity was significantly higher for one seed than for the other.

Each seed region (FEF or IFJa) was tested independently; their connectivity profiles were not averaged before statistical testing, preserving each region’s functional fingerprint.
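To make the two testing modes concrete, the sketch below spells out the arithmetic of a paired Wilcoxon signed-rank test using the large-sample normal approximation. It is a simplified illustration under stated assumptions (zero differences dropped, average ranks for ties, no tie or continuity correction), not the implementation used for the reported results. An established statistics routine would normally be preferred. All data values are hypothetical.

```python
import math
from statistics import NormalDist


def wilcoxon_signed_rank(x, y, alternative="two-sided"):
    """Paired Wilcoxon signed-rank test (normal approximation).

    Returns (W+, z, p). Requires at least one non-zero paired difference.
    alternative: "two-sided" or "greater" (x tends to exceed y).
    """
    diffs = [a - b for a, b in zip(x, y) if a != b]  # drop zero differences
    n = len(diffs)
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:  # assign average ranks to tied absolute differences
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    mean = n * (n + 1) / 4
    sd = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (w_plus - mean) / sd
    if alternative == "greater":
        p = 1 - NormalDist().cdf(z)
    else:
        p = 2 * (1 - NormalDist().cdf(abs(z)))
    return w_plus, z, p


# Single-seed mode: seed-to-parcel RSFC vs. each subject's mean whole-brain RSFC (one-tailed)
seed_fc = [0.30, 0.25, 0.41, 0.38, 0.29, 0.35, 0.33, 0.27, 0.44, 0.31]
mean_fc = [0.10] * 10
w_single, z_single, p_single = wilcoxon_signed_rank(seed_fc, mean_fc, alternative="greater")

# Contrast mode: RSFC of the same parcel with two different seeds (two-tailed)
seed_a = [1.0, 2.0, 3.0, 4.0]
seed_b = [2.0, 1.0, 4.0, 3.0]
w_contrast, z_contrast, p_contrast = wilcoxon_signed_rank(seed_a, seed_b)
```

In the single-seed toy example every subject shows higher seed connectivity, so the positive rank sum takes its maximum value. In the contrast toy example the differences cancel out and the test statistic sits exactly at its expected value under the null hypothesis.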

To control for the elevated risk of false positives arising from testing all 360 cortical parcels simultaneously, all p-values were corrected for multiple comparisons using the False Discovery Rate (FDR) procedure (Benjamini & Hochberg (1995)). In contrast to the more stringent Bonferroni correction, FDR correction maintains higher statistical power by controlling the expected proportion of false positives among all rejected null hypotheses, rather than the probability of even a single false positive. A significance threshold of q < 0.05 (FDR-corrected) was applied in all analyses.
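The Benjamini-Hochberg step-up procedure can be sketched in a few lines. The function name and the ten p-values are hypothetical and chosen only to show how the decision differs from a Bonferroni threshold.

```python
def benjamini_hochberg(p_values, q=0.05):
    """Benjamini-Hochberg step-up procedure.

    Returns one boolean per input p-value: True if the null hypothesis is rejected
    at false discovery rate q.
    """
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k whose sorted p-value satisfies p_(k) <= (k / m) * q
    k_max = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * q:
            k_max = rank
    # Reject all hypotheses up to and including rank k_max
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= k_max:
            rejected[idx] = True
    return rejected


# Hypothetical p-values for ten parcels
p_values = [0.001, 0.008, 0.039, 0.041, 0.042, 0.060, 0.074, 0.205, 0.212, 0.216]
rejected = benjamini_hochberg(p_values, q=0.05)
n_bonferroni = sum(p <= 0.05 / len(p_values) for p in p_values)
```

In this toy example the procedure rejects the two smallest p-values, whereas a Bonferroni threshold of 0.05 / 10 would retain only the smallest one.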

For visualisation, significant p-values were converted to z-scores. The z-scores were summed across all seeds for which a given parcel reached significance, and then divided by the total number of seeds showing significance for that parcel. The normalisation generates a score that weights connectivity strength by consistency of the effect across seeds. Alongside z-scores, we report the mean partial correlation value () for each significant ROI pair, averaged across subjects, as a group-level effect-size index. The z-score captures statistical consistency across subjects; captures the actual magnitude of coupling. Connections with despite a high z-score reflect statistically consistent but functionally negligible coupling and are treated as such in the interpretation. For contrast analyses, the equivalent metric is the mean difference in partial correlation between the two seeds ().
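The conversion and averaging described above could look roughly as follows. The sketch assumes a one-tailed conversion of p-values to z-scores via the inverse standard normal distribution, which is an assumption of this example because the exact conversion is not specified here. The function names and input values are hypothetical.

```python
from statistics import NormalDist


def p_to_z(p):
    """Convert a one-tailed p-value to a z-score (assumed conversion, see lead-in)."""
    # -inv_cdf(p) equals inv_cdf(1 - p) but stays accurate for very small p
    return -NormalDist().inv_cdf(p)


def mean_significant_z(results):
    """Average z over the seeds for which the parcel reached significance.

    results: list of (p_value, is_significant) pairs, one pair per seed.
    Returns None if the parcel is not significant for any seed.
    """
    z_scores = [p_to_z(p) for p, significant in results if significant]
    if not z_scores:
        return None
    return sum(z_scores) / len(z_scores)


# Hypothetical parcel tested against three seeds; significant for two of them
parcel_results = [(0.025, True), (0.5, False), (0.025, True)]
parcel_score = mean_significant_z(parcel_results)
```

Seeds without a significant effect do not enter the average, so the score reflects only the seeds that actually show the connection.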

3.4 Brain-Behaviour Correlation

To validate the RSFC patterns reported in Section 4, we assessed whether individual differences in RSFC could predict performance on behavioural tasks that specifically test the auditory ‘what’ and ‘where’ pathways. This approach links the network architecture observed during rest to cognitive function during task engagement, lending support to the supramodal organisation hypothesis.

3.4.1 Behavioural Subject Subsample

For the connectome-based predictive modelling, we used behavioural data of N = 371 participants from the HCP S1200 release. While the full resting-state cohort counts participants, restricting the behavioural analyses to the smaller subset guarantees complete data across all evaluated tasks. For each task, subjects were additionally excluded if they exceeded a head motion threshold of 0.15 mm framewise displacement or had missing behavioural scores, resulting in slightly varying effective sample sizes per analysis (see sections 3.4.2.1 and 3.4.2.2).
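The per-task exclusion logic can be sketched as a simple filter. The record fields `mean_fd` and `score`, and the rule that a subject failing both criteria is counted under head motion, are assumptions of this example. The thesis specifies only the 0.15 mm threshold and the two exclusion reasons.

```python
def apply_exclusions(subjects, max_fd=0.15):
    """Split subjects into kept and excluded according to motion and missing scores.

    subjects: list of dicts with keys "mean_fd" (framewise displacement in mm)
              and "score" (behavioural score, or None if missing); both keys are hypothetical.
    Returns (kept_subjects, n_excluded_motion, n_excluded_missing).
    """
    kept, n_motion, n_missing = [], 0, 0
    for subject in subjects:
        if subject["mean_fd"] > max_fd:      # motion is checked first (assumption)
            n_motion += 1
        elif subject["score"] is None:
            n_missing += 1
        else:
            kept.append(subject)
    return kept, n_motion, n_missing


# Hypothetical records
subjects = [
    {"id": "s1", "mean_fd": 0.08, "score": 0.9},
    {"id": "s2", "mean_fd": 0.20, "score": 0.8},   # excluded: motion
    {"id": "s3", "mean_fd": 0.10, "score": None},  # excluded: missing score
    {"id": "s4", "mean_fd": 0.15, "score": 0.7},   # kept: threshold not exceeded
    {"id": "s5", "mean_fd": 0.30, "score": None},  # excluded: counted under motion
]
kept, n_motion, n_missing = apply_exclusions(subjects)
```

Counting each subject under exactly one reason keeps the exclusion numbers additive, so that the effective sample size equals the subsample size minus both counts.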

3.4.2 Behavioural fMRI Tasks

Three tasks were selected to evaluate the auditory dual-stream framework defined in our study. They were chosen to enable a functional dissociation between semantic processing (‘what’), auditory-spatial decoding (‘where’), and low-level acoustic filtering, while acknowledging the limitations of the HCP task set.

3.4.2.1 Language Task (Story Comprehension)

In the story condition of the HCP Language Task, participants listened to short auditory stories adapted from Aesop’s fables, each followed by a two-alternative forced-choice (2-AFC) question testing the semantic content of the story (Binder (2011), Barch et al. (2013)). Two performance measures were extracted. Story Accuracy measures the proportion of correct responses; because the vocabulary of the stories is simple, it shows a ceiling effect and likely reflects sustained attention rather than semantic access. Story Median Reaction Time, by contrast, captures the speed of semantic retrieval and is therefore used as the primary measure of ventral ‘what’-stream efficiency. For this analysis, 20 subjects were excluded from the N = 371 subsample (18 due to head motion > 0.15 mm, 2 due to missing scores), yielding an effective N = 351. The Spearman correlation between behavioural scores and head motion was (), suggesting that motion artefacts did not confound the results.

3.4.2.2 Working Memory Task (2-Back Place)

The HCP Working Memory Task consists of a visual N-Back design in which participants track sequential images and respond whether the current image matches the one presented two trials before (Barch et al. (2013)). The place subcondition, in which stimuli consisted of landscape and scene photographs, was extracted as a measure of visuo-spatial working memory (Barch et al. (2013)).

This task was chosen as the closest available test of spatial processing capacity, which is linked to the FEF’s dorsal stream. Because the HCP dataset does not include an auditory spatial task, this visual task serves as a test of the supramodal hypothesis. Participants might solve the task via object recognition rather than spatial navigation, which might reduce observed effects in the dorsal network. Additionally, since IFJa has been shown to be involved in working memory (Bedini & Baldauf (2021)), its connectivity may contribute to Place task predictions independently of any semantic strategy, making it difficult to cleanly attribute ventral model effects to a single mechanism. For this analysis, 19 subjects were excluded from the N = 371 subsample (18 due to head motion > 0.15 mm, 1 due to missing scores), yielding an effective N = 352. However, the Spearman correlation between behavioural scores and head motion was (), indicating a significant association between motion and Place accuracy. This constitutes a potential confound and is discussed as a limitation in Section 5.7.

3.4.2.3 Words-in-Noise (NIH Toolbox Noise Comparison)

The NIH Toolbox Words-in-Noise test assesses the ability to recognise spoken words in background noise by adaptively decreasing the signal-to-noise ratio across trials (Zecker (2013)). The task is a valid measure of low-level acoustic signal separation rather than high-level semantic processing. This test was included as a control paradigm to determine whether IFJa network connectivity generalises across auditory tasks or whether acoustic signal separation relies on a functionally different network.

3.4.3 Connectivity-Based Behavioural Prediction

The connectivity values were derived from partial correlation matrices computed across the N = 371 subsample, reflecting direct functional coupling between ROIs with shared variance removed. As behavioural scores were not normally distributed, Spearman’s rank correlation was used to identify predictive edges. A linear regression model was then fitted to predict behavioural performance from the summary connectivity score of each subject. Separate models were computed for the ventral ROIs subset and the dorsal ROIs subset, enabling predictions specific to the streams.

We evaluated model performance using leave-one-out cross-validation (K = effective N per task after exclusions; see Sections 3.4.2.1–3.4.2.2), providing an R value as the indicator of predictive accuracy. A significance threshold of p < 0.05 was applied for all tasks.
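A minimal sketch of the leave-one-out prediction loop is given below. Several details are assumptions made for illustration and are not taken from the thesis: edges are selected by an absolute Spearman threshold `rho_min` rather than by a p-value, the summary score is the signed sum of the selected edges, and the toy data contain only two edges. Edge selection and model fitting are repeated inside every fold so that the held-out subject never influences the model.

```python
import math


def rank(values):
    """Average ranks (1-based); tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def pearson(x, y):
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy) if sxx > 0 and syy > 0 else 0.0


def spearman(x, y):
    return pearson(rank(x), rank(y))


def summary_score(edges, signs):
    """Signed sum of the selected edges (sign 0 means the edge was not selected)."""
    return sum(s * e for s, e in zip(signs, edges))


def loocv_predict(X, y, rho_min=0.3):
    """X: subjects x edges (list of lists); y: one behavioural score per subject."""
    n, n_edges = len(X), len(X[0])
    predictions = []
    for test in range(n):
        train = [i for i in range(n) if i != test]
        y_tr = [y[i] for i in train]
        signs = []
        for e in range(n_edges):  # edge selection on training subjects only
            rho = spearman([X[i][e] for i in train], y_tr)
            signs.append(0 if abs(rho) < rho_min else (1 if rho > 0 else -1))
        s_tr = [summary_score(X[i], signs) for i in train]
        ms, my = sum(s_tr) / len(s_tr), sum(y_tr) / len(y_tr)
        sxx = sum((s - ms) ** 2 for s in s_tr)
        slope = sum((s - ms) * (v - my) for s, v in zip(s_tr, y_tr)) / sxx if sxx > 0 else 0.0
        intercept = my - slope * ms
        predictions.append(intercept + slope * summary_score(X[test], signs))
    return predictions, pearson(predictions, y)


# Toy data: behaviour depends linearly on the first edge only (hypothetical values)
X = [[0.1, 0.5], [0.2, 0.1], [0.3, 0.4], [0.4, 0.2], [0.5, 0.3], [0.6, 0.6]]
y = [1.0 + 2.0 * row[0] for row in X]
predicted, r_value = loocv_predict(X, y, rho_min=0.9)
```

In this toy case only the first edge passes the threshold in every fold, so the held-out scores are recovered almost exactly and the correlation between predicted and observed scores is close to 1. Real connectivity data would of course give far noisier predictions.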

4.0 Results

Our analyses reveal a clear functional dissociation between the auditory where- and what-streams at the level of their prefrontal top-down regulators. Partial correlation of the two seed regions FEF and IFJa shows a distinct connectivity that aligns with the proposed supramodal organisation. The FEF is selectively coupled to spatial-parietal and motor circuits, while IFJa is preferentially connected to the ventral semantic network and the Broca complex. This double dissociation is further supported by seed-specificity validations (FEF vs. 55b, IFJa vs. IFJp) and by the analysis of Broca’s areas 44 and 45.

All z-scores reported below are from partial correlation analyses unless otherwise stated. Effect sizes are reported as , the mean partial correlation coefficient across subjects. Full correlation results are discussed only where they show meaningful contrasts compared to the partial correlation pattern.

4.1 Global Connectivity Patterns

Before examining each stream individually, we assess the overall functional dissociation between the auditory ‘what’ and ‘where’ streams. Using full and partial correlation, we compare the connectivity profiles of the two prefrontal seed regions FEF and IFJa, and validate their anatomical specificity against neighbouring control seeds.

4.1.1 FEF vs. IFJa: Validation of Prefrontal Seed Regions

To validate the anatomical specificity of our seed regions, we compared their partial correlation connectivity fingerprints across both hemispheres.

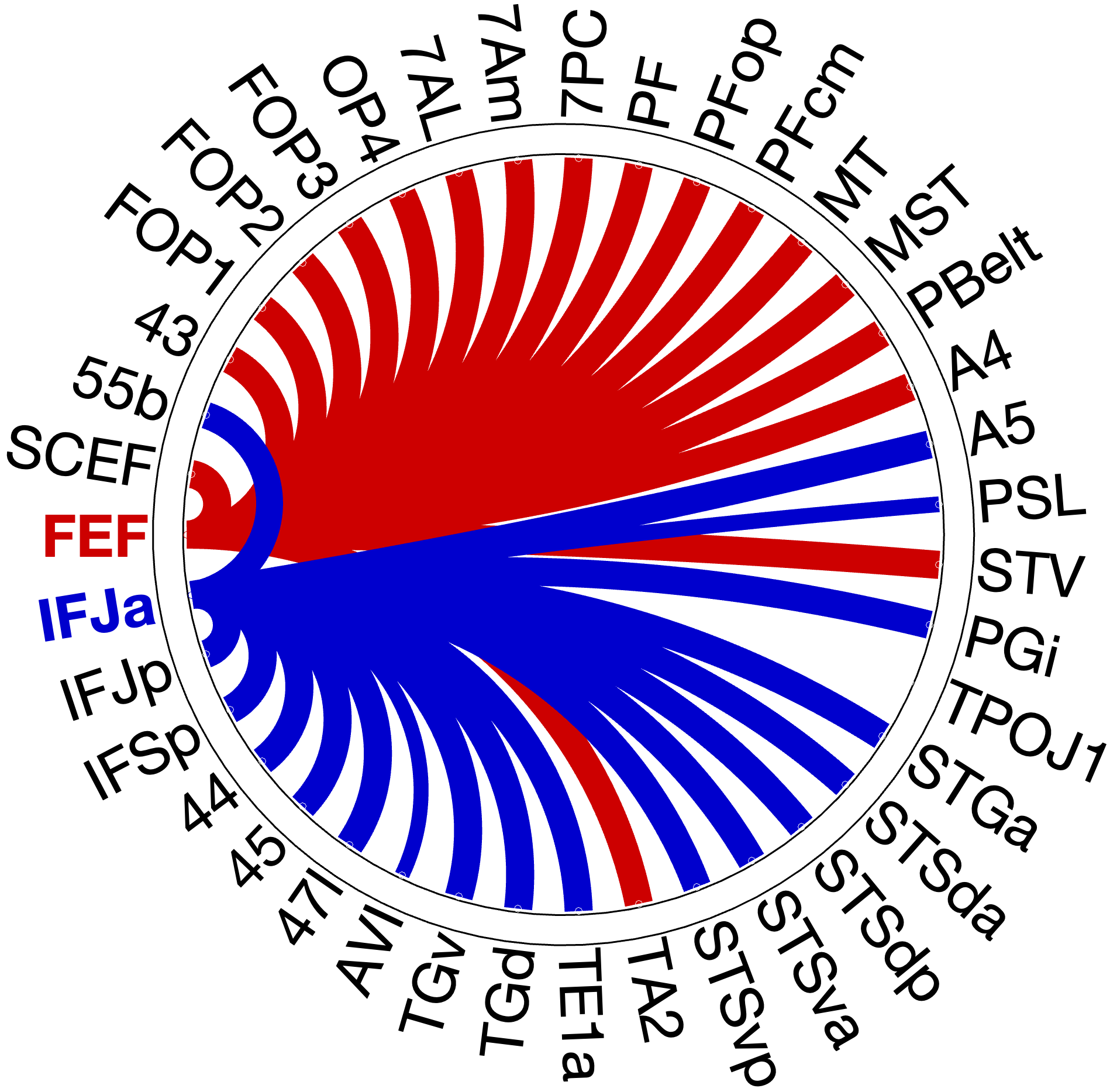

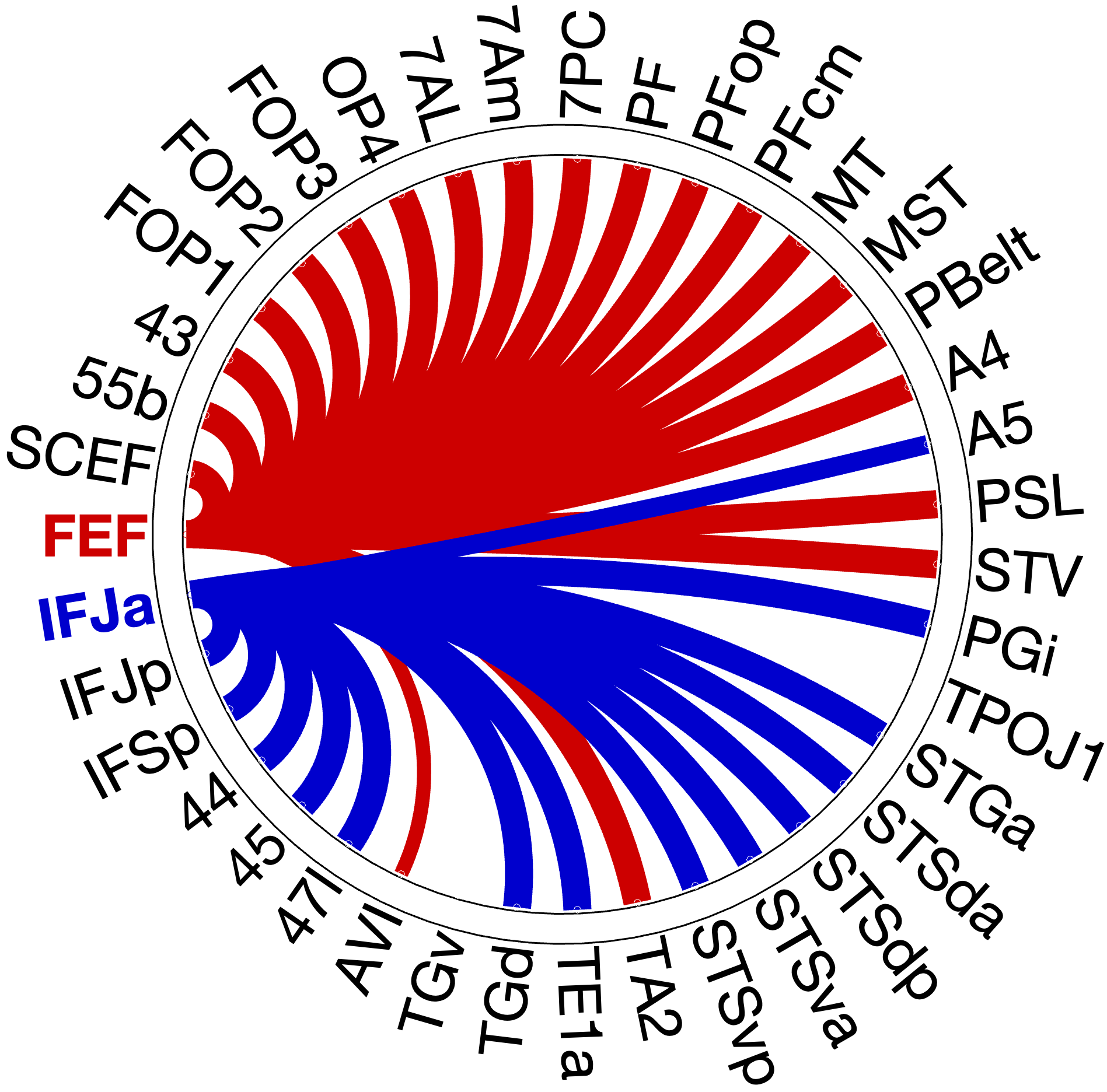

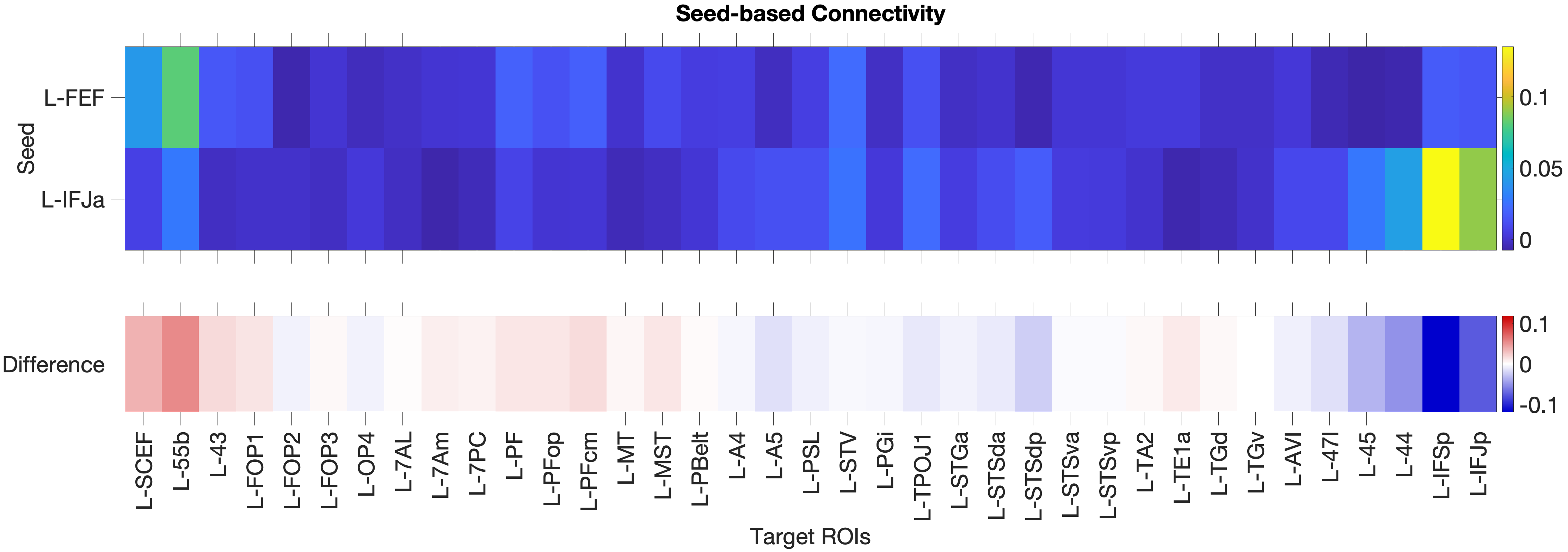

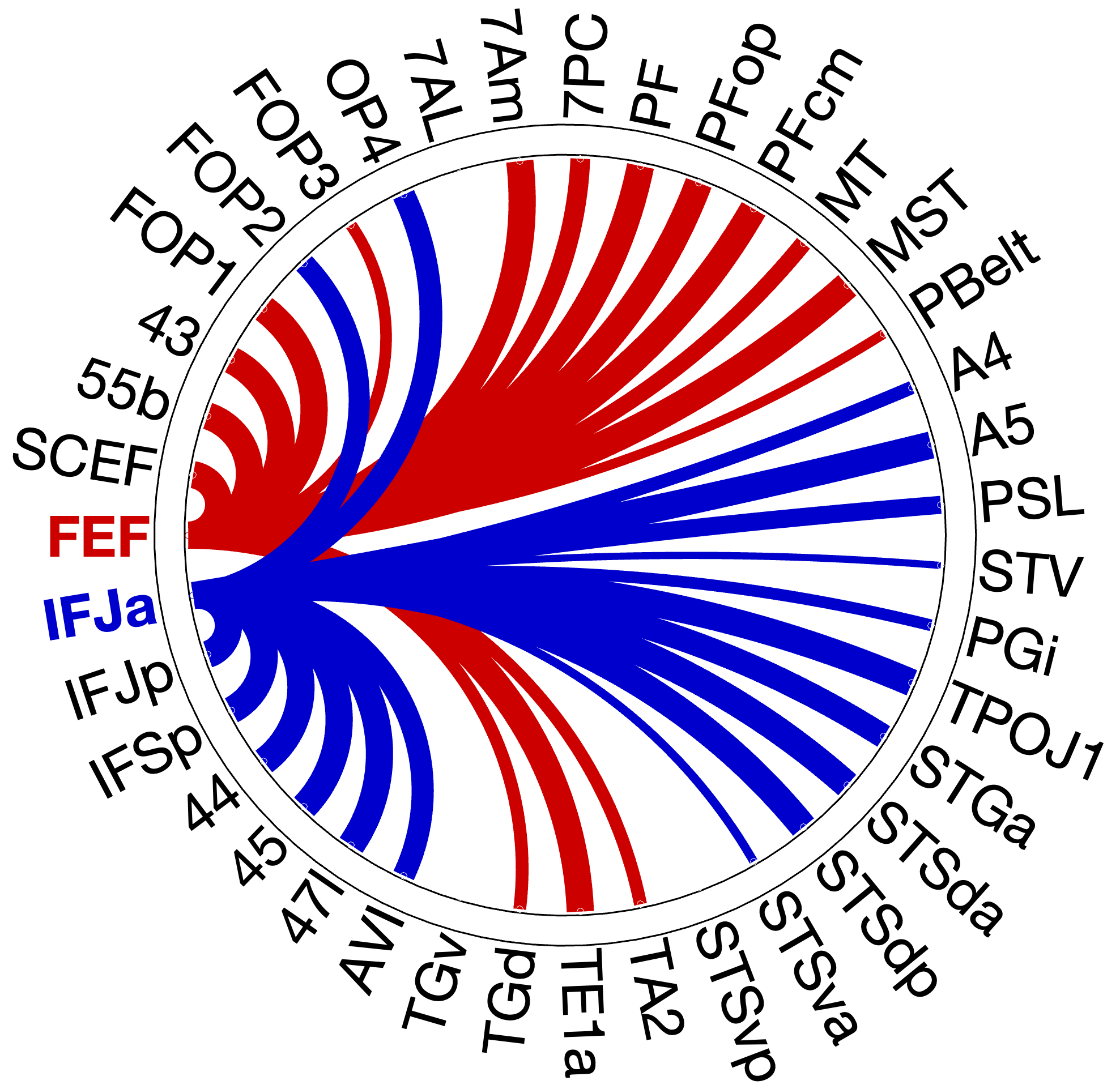

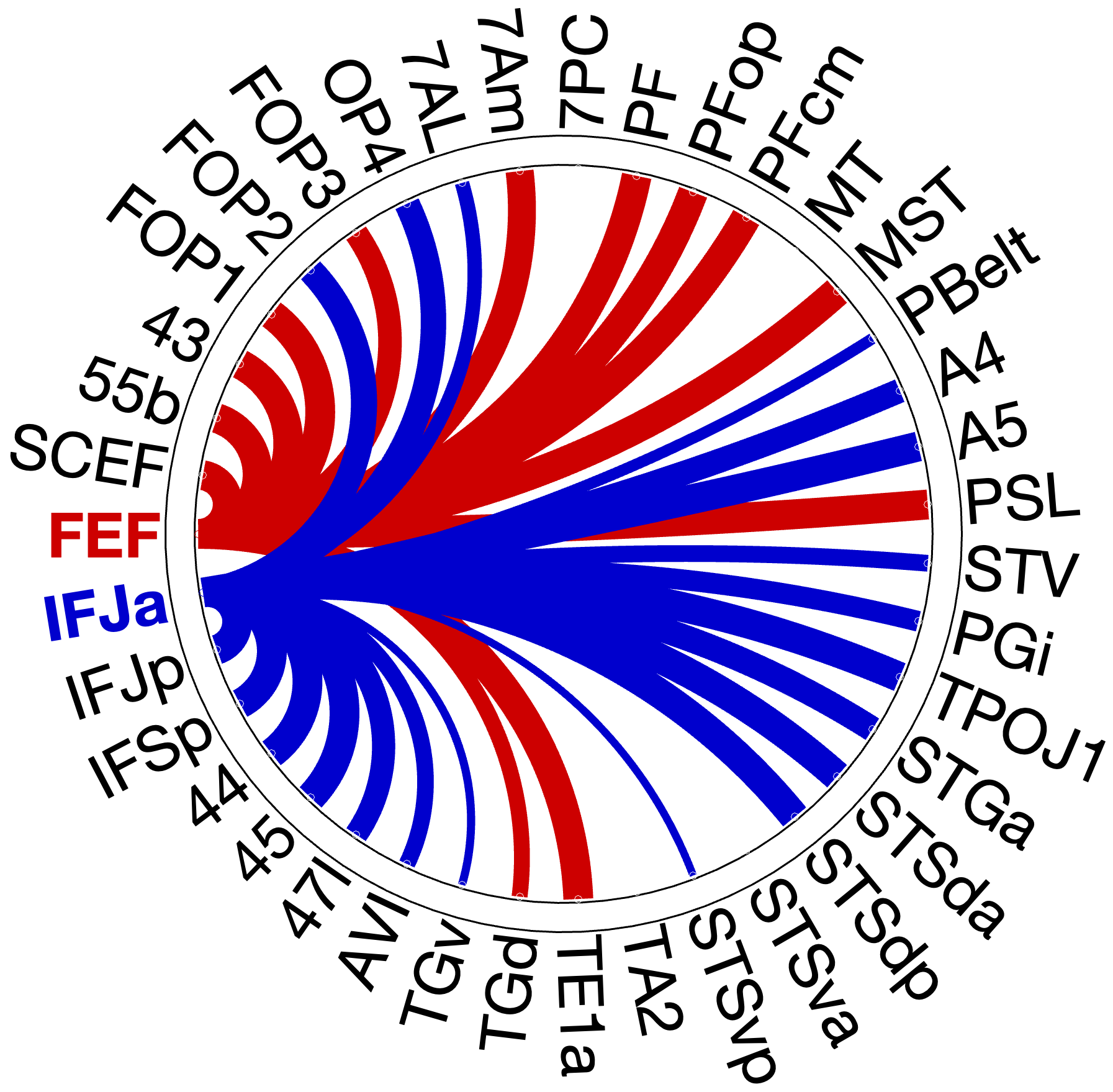

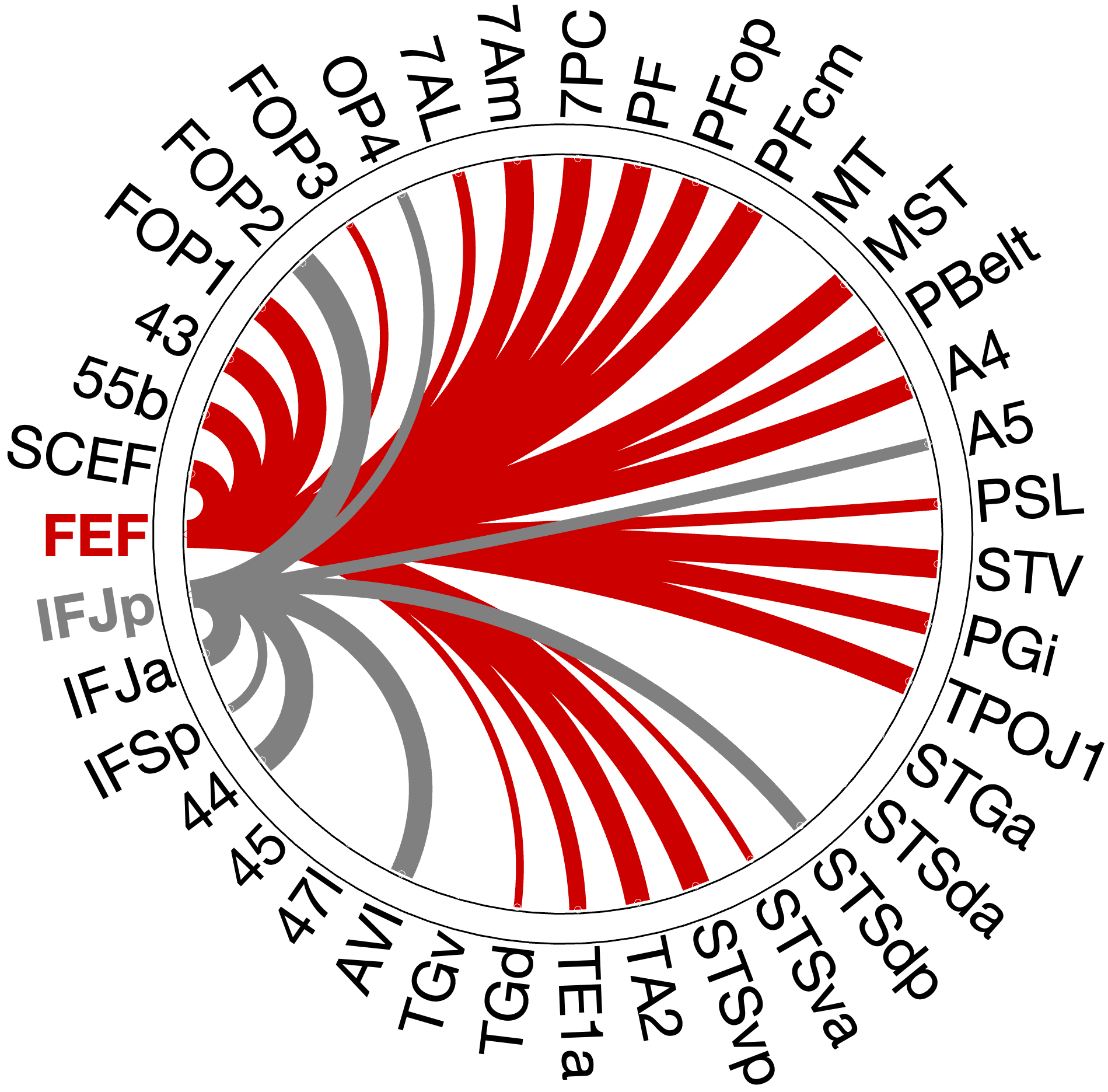

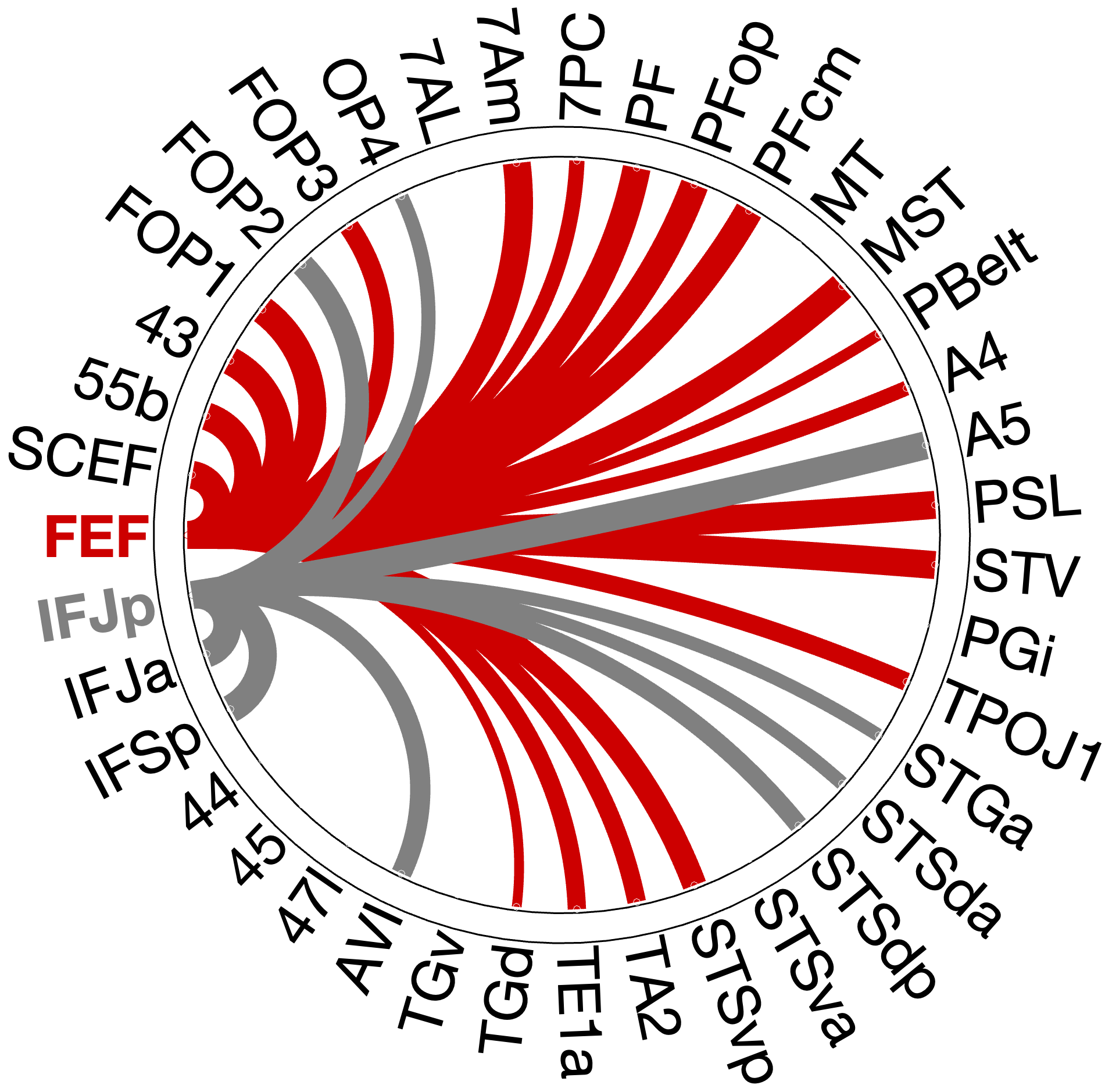

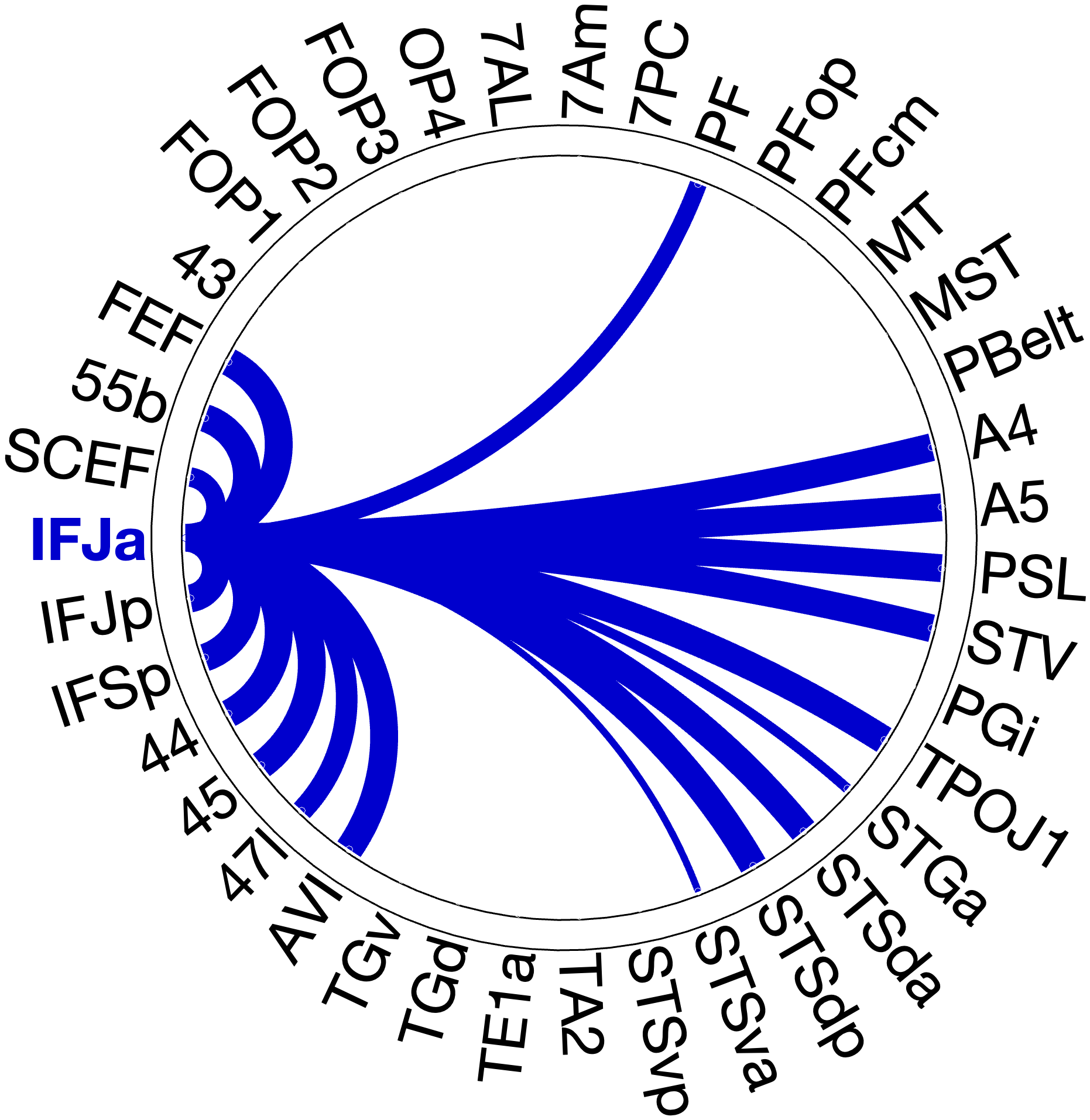

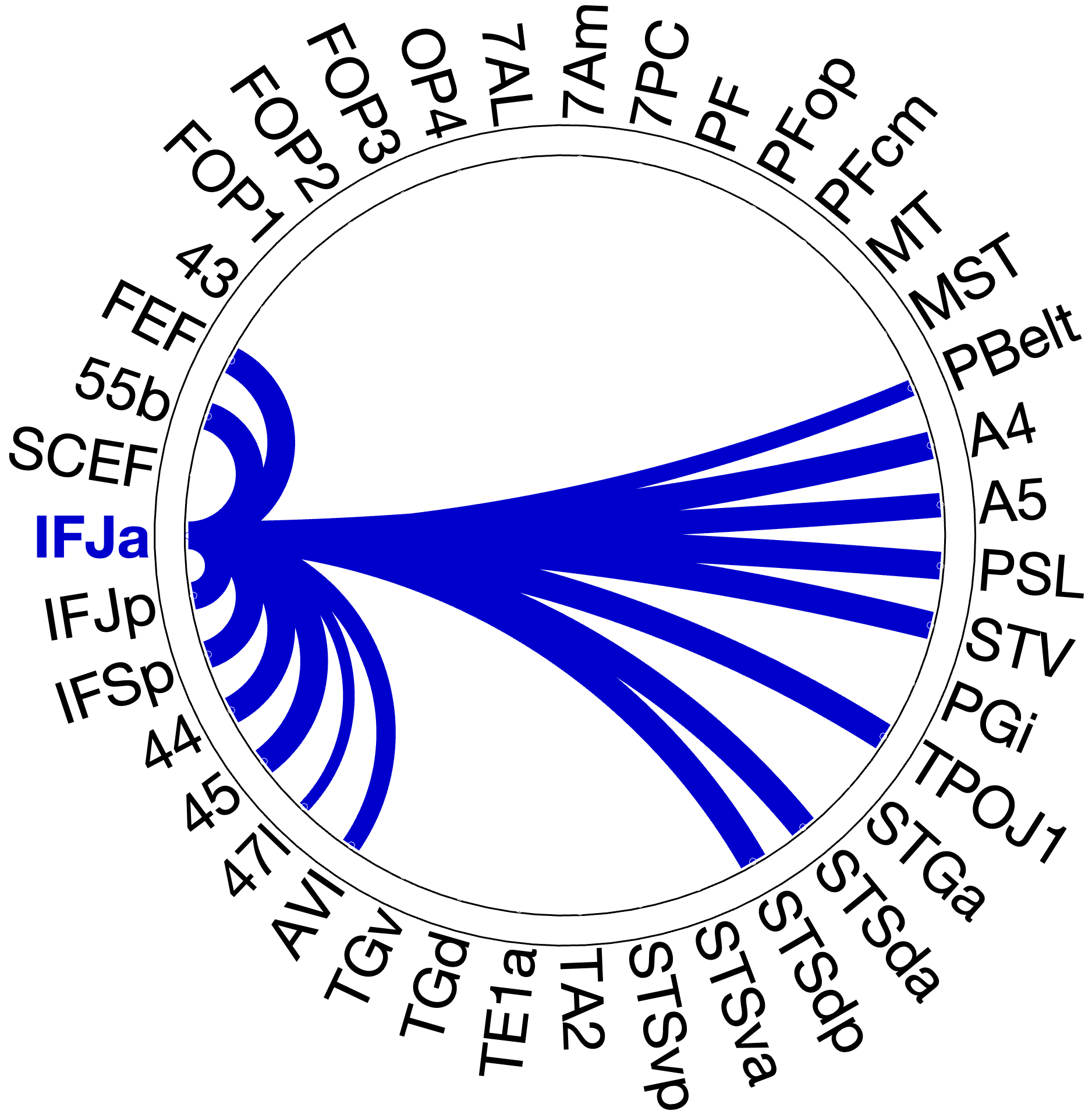

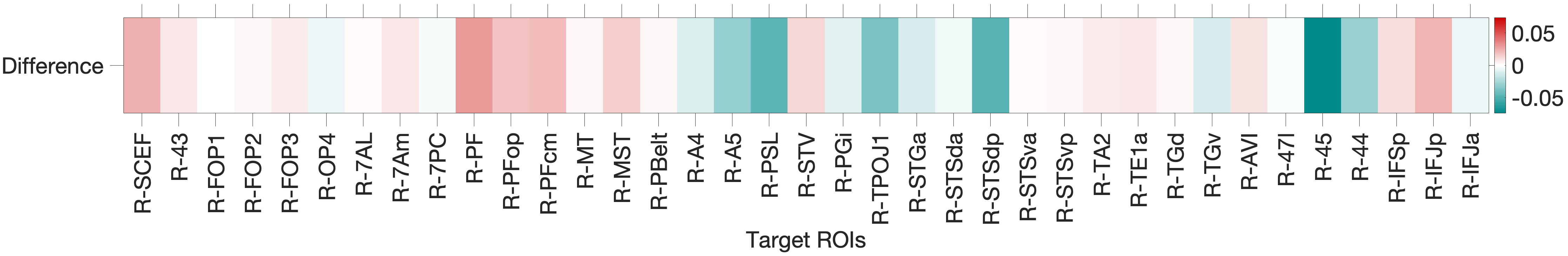

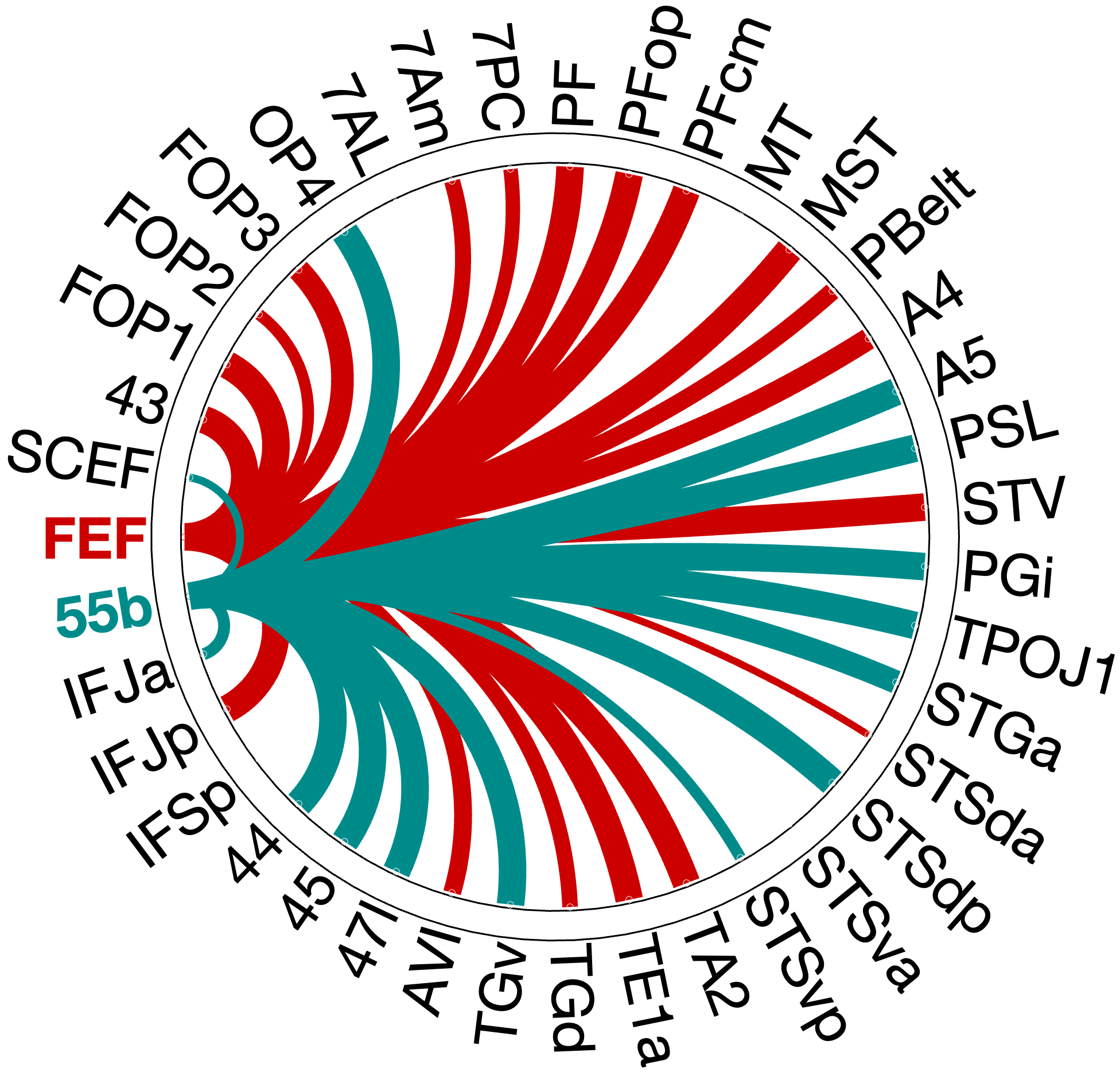

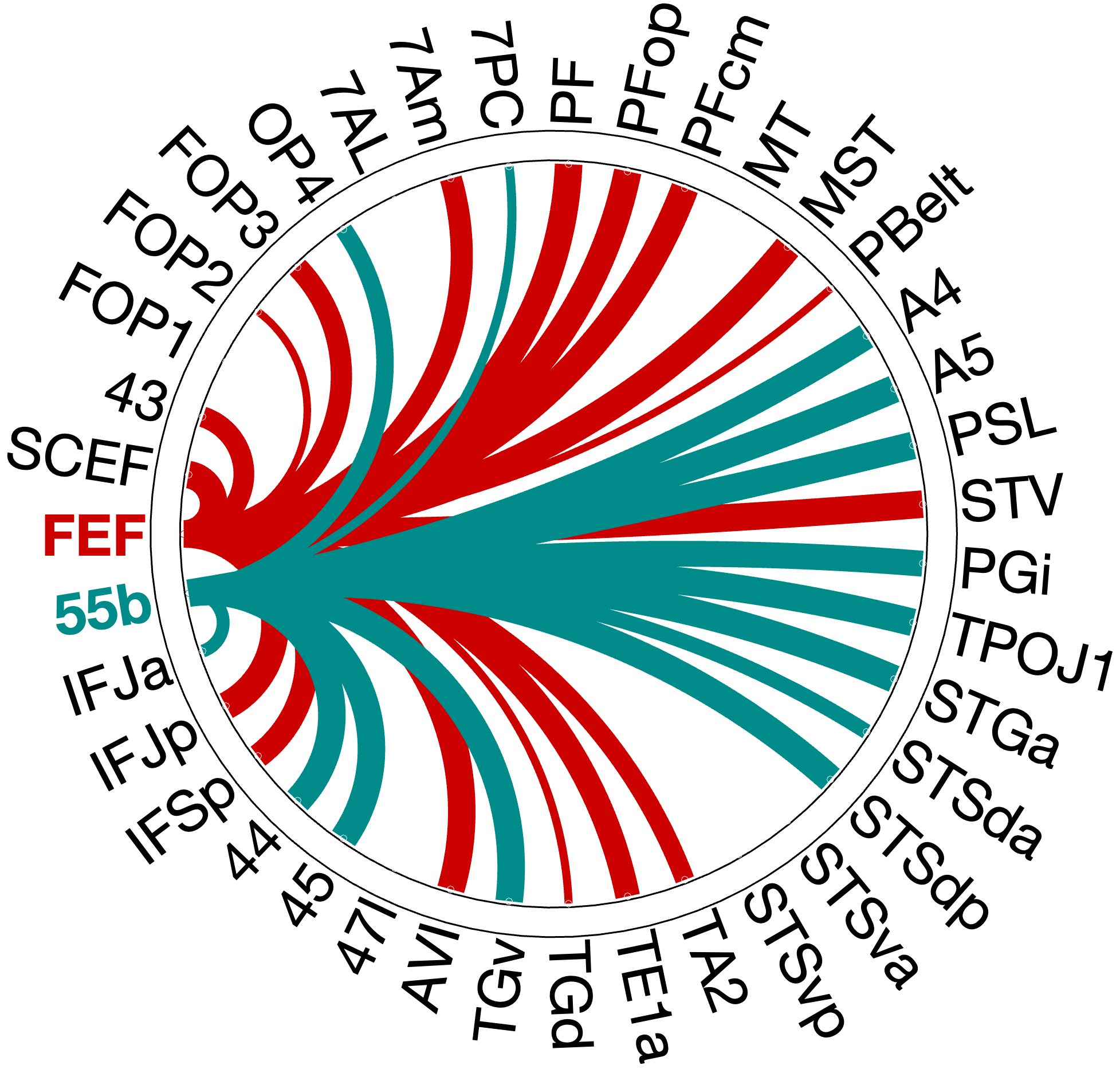

Figure 4.X: Circular connectivity diagrams comparing FEF (red) and IFJa (blue) full correlation with all auditory target ROIs, left (A) and right (B) hemisphere. The full correlation profiles reveal broad network-wide co-activation, including strong FEF coupling with superior parietal areas (7Am, 7PC, OP4) and IFJa coupling with temporal-semantic regions. These unpartialled patterns reflect general network membership and shared variance; partial correlation (Figure 4.X) isolates the direct functional pathways.

The full-correlation analysis reveals a clear double dissociation: FEF couples significantly with spatial-parietal areas (full corr.: OP4 , ; 7Am , ; 7PC , ; RH). Two exceptions stand out: TA2 couples more strongly with FEF than with IFJa (full corr.: , ; RH), and 55b couples more strongly with IFJa than with FEF.

We then applied partial correlation to remove the influence of third-party regions and isolate direct connectivity within the auditory streams. The analysis shows that the most direct auditory connections to FEF are consistently rooted in the spatial orienting network. Both hemispheres show strong coupling with the inferior parietal cluster (PF, PFop, PFcm). The network is nevertheless right-dominant in specific regions, consistent with the proposed right-lateralisation of spatial auditory processing (Hickok & Poeppel 2007 - Nature). While the left hemisphere dominates in FOP3 (, ), 7Am (, ) and 7PC (, ), the right hemisphere clearly dominates in PF (right , ; left , ) and additionally couples with the perisylvian language area (PSL, , ), suggesting a more specialised, right-lateralized fronto-parietal integration for spatial auditory processing.

Figure 4.1: Functional connectivity heatmap comparing FEF and IFJa partial correlation z-scores across 36 auditory target ROIs, left hemisphere. Warm colours (yellow-orange) indicate positive coupling; cool colours indicate near-zero or negative coupling. The opposing colour patterns between FEF and IFJa rows reveal the double dissociation: FEF couples preferentially with spatial-parietal and motor regions, while IFJa couples preferentially with temporal-semantic regions.

Figure 4.X: Circular connectivity diagrams comparing FEF (red) and IFJa (blue) partial correlation with all auditory target ROIs, left (A) and right (B) hemisphere. Each arc segment represents one ROI; line thickness and colour indicate the magnitude and direction of preferential coupling. Spatial-motor ROIs (inferior parietal, premotor) couple predominantly with FEF; temporal-semantic ROIs (STS, Broca areas) couple predominantly with IFJa.

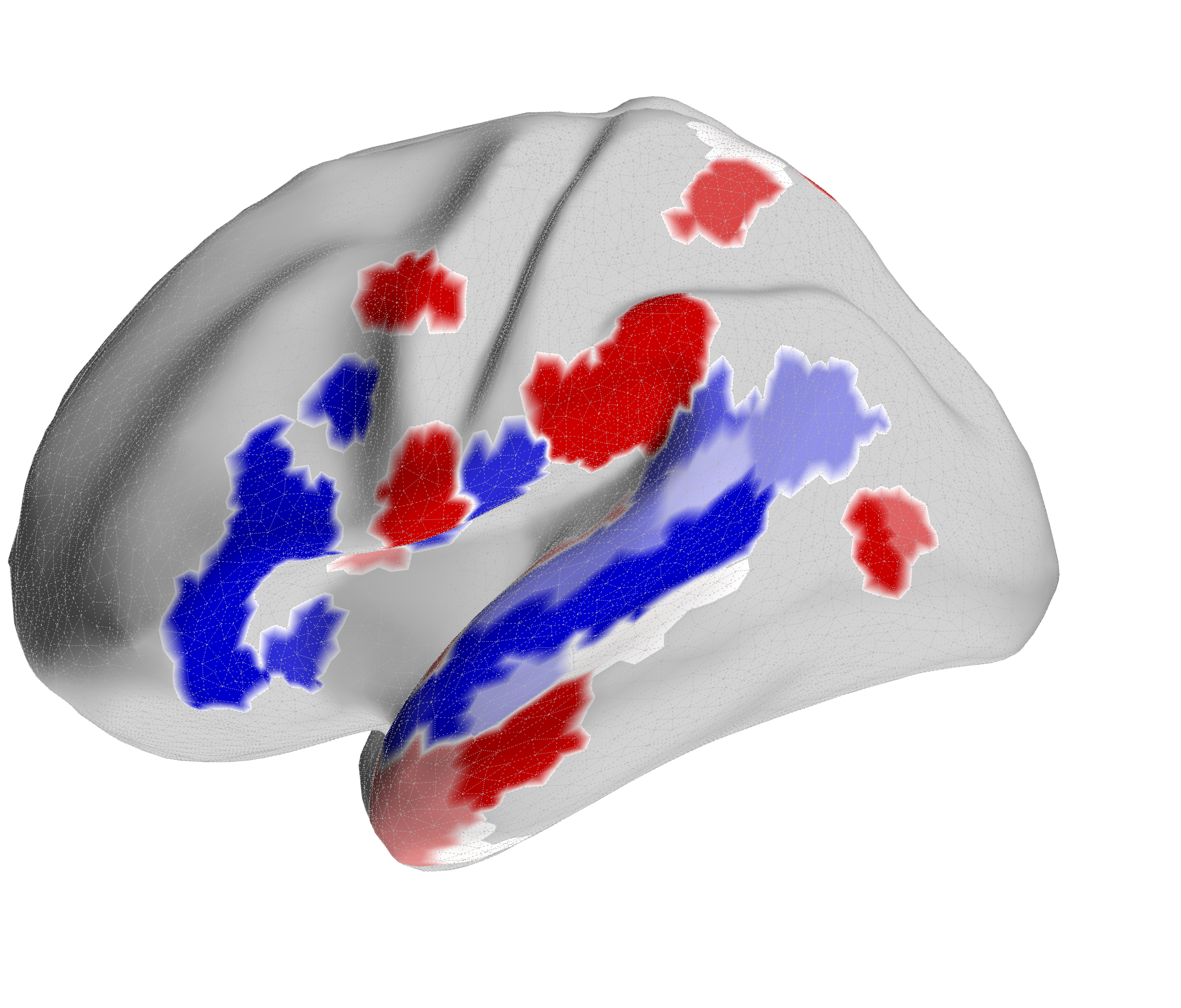

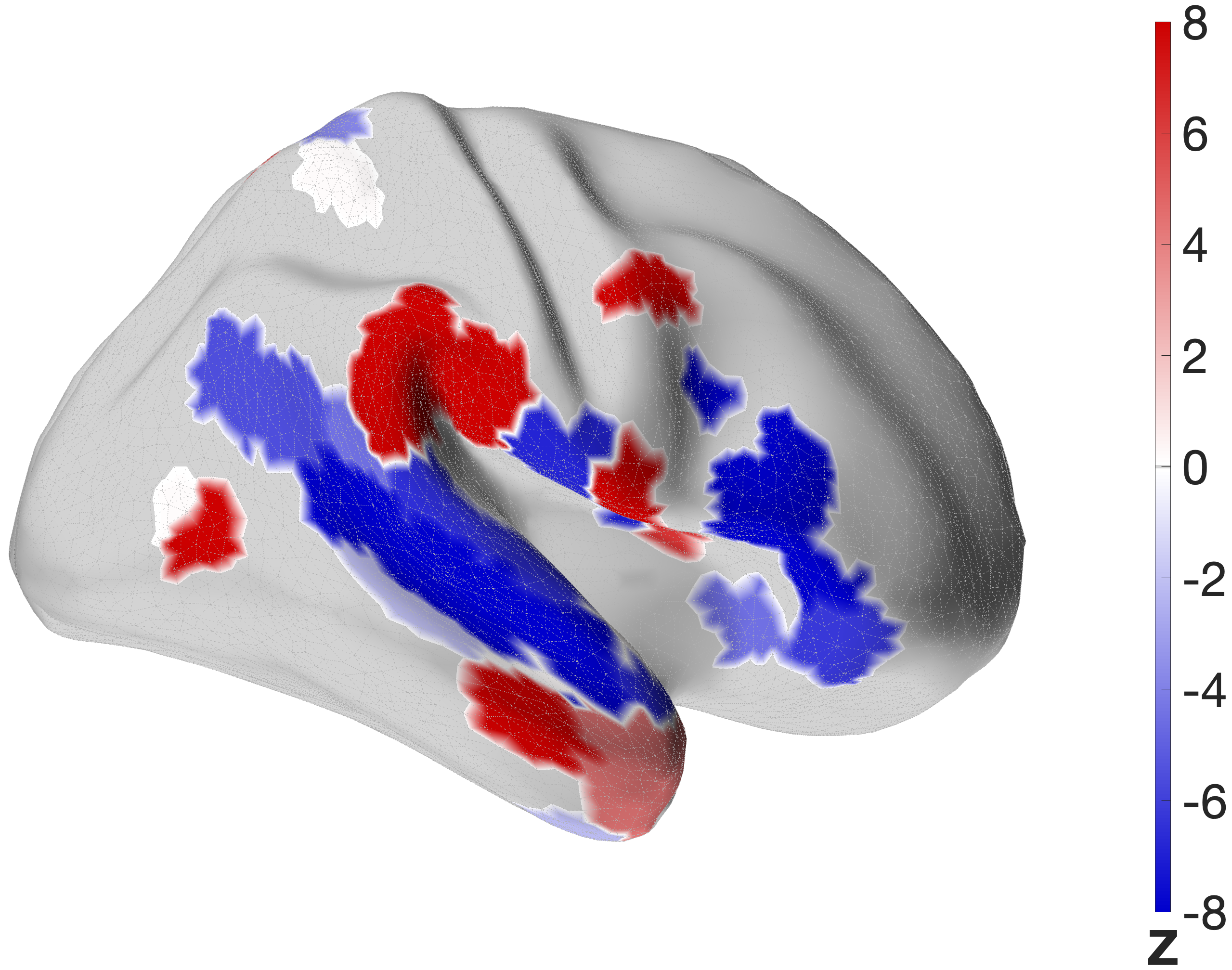

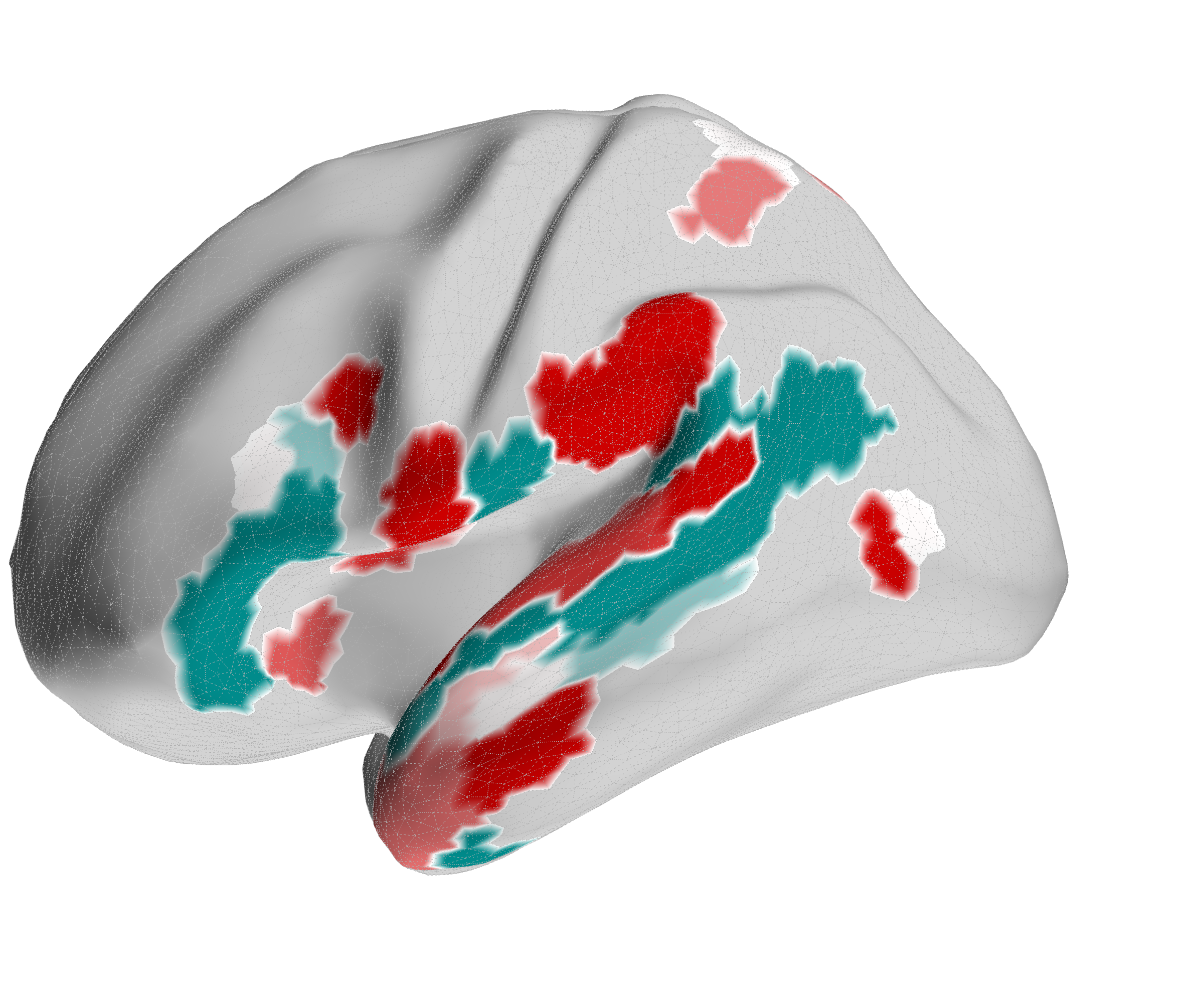

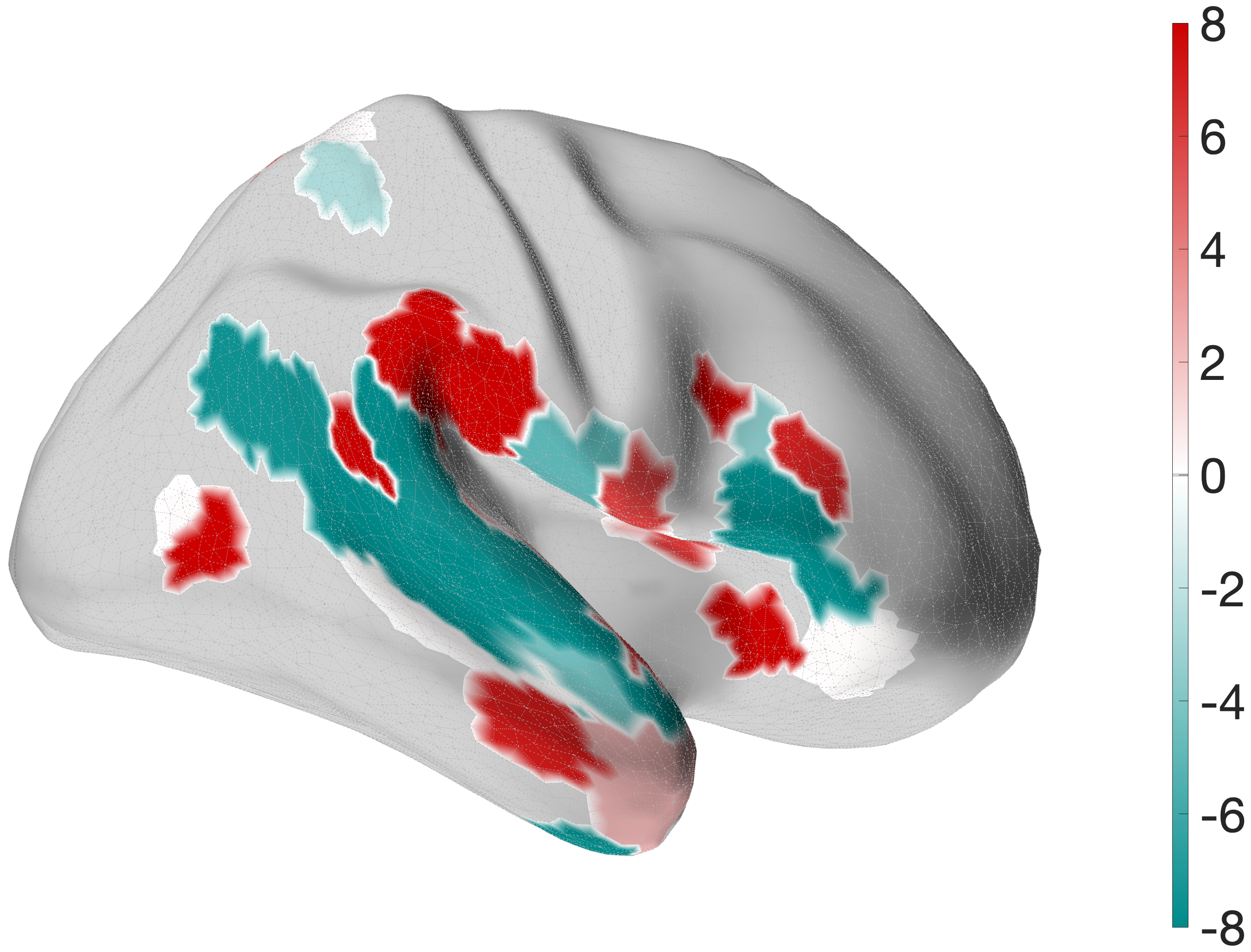

Figure 4.X: FEF versus IFJa partial correlation connectivity projected onto the cortical surface, left (A) and right (B) hemisphere. Red regions indicate preferential FEF coupling; blue regions indicate preferential IFJa coupling. The contrast between parietal and motor cortex (FEF, red) versus superior temporal sulcus and Broca’s area (IFJa, blue) visualises the double dissociation across both hemispheres.

- FEF: The FEF seed exhibits robust full correlation with the superior parietal lobe (7AL, 7Am, 7PC), mirroring the ‘where’-stream described for the visual system (Bedini & Baldauf (2021)), and with auditory and motor regions such as A5, PBelt, FOP1, STV and PSL.

- IFJa: In contrast, the IFJa showed strong coupling with the anterior language network (HCP language task)

4.1.2 Topographical Validation: IFJp Control Seed

To validate that top-down control over the auditory what-stream is driven by the anterior subdivision of IFJ, a control partial correlation model replaced IFJa with IFJp, its immediate posterior neighbour.

The control analysis confirms the functional dissociation of where and what stream. The FEF maintains its robust connectivity to the dorsal auditory and motor network with 23 significant regions in the left and 20 in the right hemisphere.

Figure 4.X: Specificity control: FEF (red) versus IFJp (grey) partial correlation circular diagrams, left (A) and right (B) hemisphere. Substituting IFJa with its immediate posterior neighbour IFJp substantially reduces temporal coupling, confirming that top-down control over the auditory ‘what’-stream is specific to the anterior IFJ subdivision and does not generalise to adjacent prefrontal regions.

In contrast, replacing the ‘what’-hub IFJa with IFJp leads to a marked drop in connectivity with the semantic what-stream. Compared with the FEF, IFJp shows little coupling with the temporal lobe.

This contrast indicates that the prefrontal control hub for auditory identity processing is anchored specifically in the IFJa.

4.2 Testing the “Where” Stream (FEF Connectivity)

To evaluate the precise anatomical pathways of the top-down auditory attention network, the functional connectivity was assessed using full and partial correlation, revealing a clear spatial-dorsal pattern that places the FEF within its established framework.

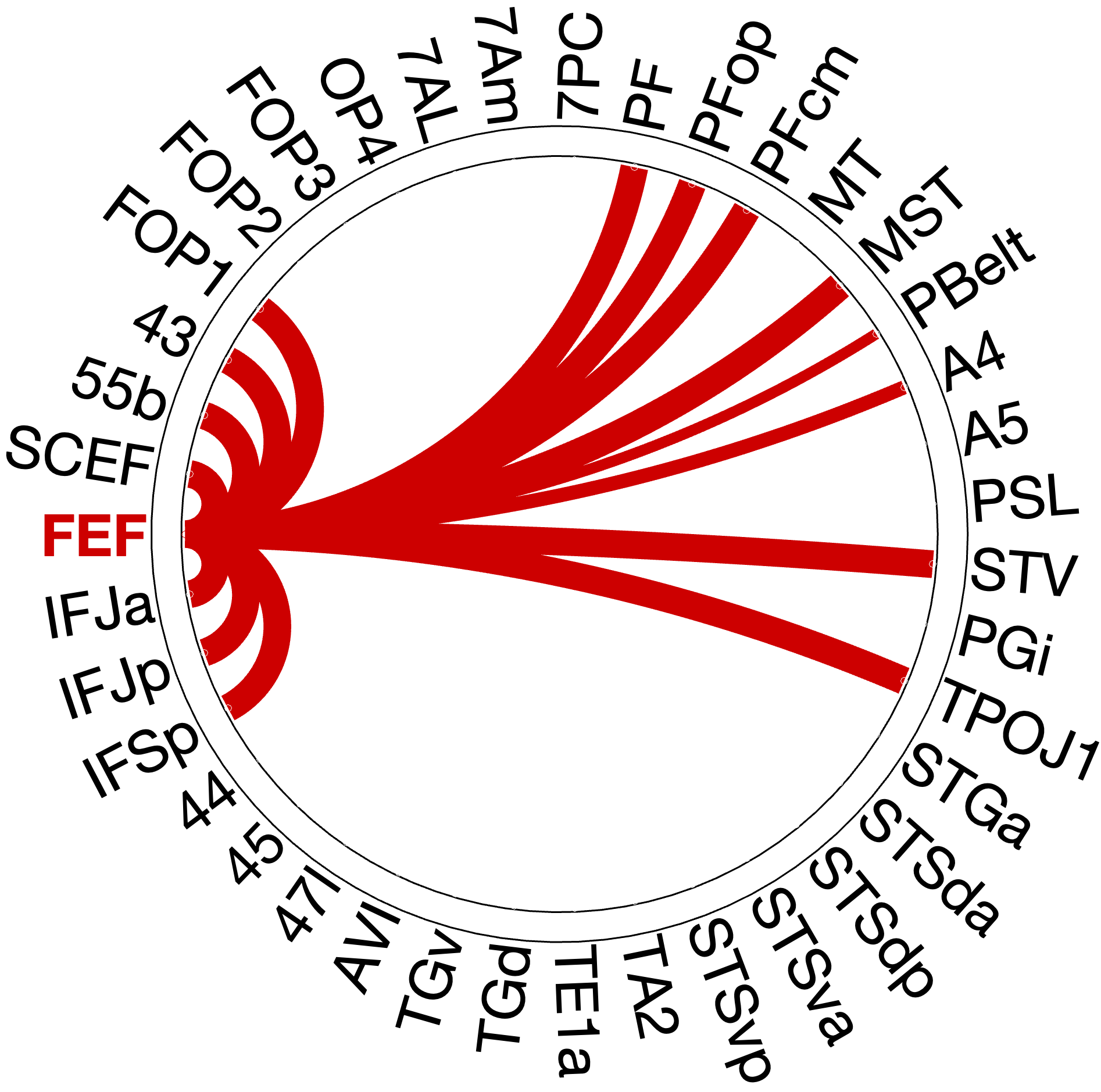

Figure 4.5: FEF single-seed partial correlation connectivity profile, left (A) and right (B) hemisphere. Significant connections are concentrated in inferior parietal (PF, PFop, PFcm), premotor-opercular (SCEF, 55b, FOP1), and motion-sensitive (MST) regions. This spatial-motor distribution is consistent with a dorsal ‘where’-stream profile under FEF top-down control.

4.2.1 Inferior Parietal Connection

In visual stream models, FEF couples strongly with the superior parietal lobule areas 7PC, 7Am and 7AL. Partial correlation, however, shows no significant coupling of FEF with these superior parietal regions, whereas FEF still couples bilaterally with the inferior parietal regions PF (left , ; right , ), PFop and PFcm.

4.2.2 Premotor cluster (“How” Stream)

The strongest connections of FEF are with premotor and frontal opercular areas. The SCEF (left , ; right , ) and area 55b (left , ; right , ) form a bilateral motor-premotor cluster, along with FOP1 and area 43. Together, these areas might form the “how” stream proposed by Hickok & Poeppel 2007 - Nature, connecting spatial information with motor action plans.

4.2.3 Resolving Superior Parietal and Inferior Temporal Connections

A crucial discrepancy emerges when comparing the full and partial correlation profiles. In the full correlation, the FEF exhibits strong coupling with superior parietal areas (e.g., 7Am: left ; 7PC: left ). In the partial correlation model, however, these connections vanish entirely. This indicates that FEF does not maintain direct connections with the superior parietal lobule once the auditory ROIs are partialled out. For auditory-spatial information, FEF mainly recruits the inferior parietal lobule (PF/PFop) and motor-related areas. Furthermore, temporal areas that reach significant z-scores in the FEF vs. IFJa partial correlation figure (TE1a, TA2, TGd) show no substantial effect size (), suggesting that the FEF remains functionally decoupled from the temporal regions of the ventral “what” stream.

4.2.4 Motion and Supramodal Integration

The FEF maintains direct functional access to spatial motion tracking. Partial correlation reveals bilateral coupling with the medial superior temporal area (MST: left , ; right , ). Additionally, multimodal convergence hubs STV (left , ; right , ) and TPOJ1 (left , ; right , ) show significant direct integration with FEF in both hemispheres (Rolls et al. (2023) - Cerebral Cortex).

The right hemisphere reveals an exclusive direct connection to the PSL (, ), which is entirely absent in the left hemisphere’s partial profile, indicating a right-lateralized pathway for abstract spatial-auditory processing (Rolls (2022) - NeuroImage).

4.3 Testing the “What” Stream (IFJa Connectivity)

To map the precise anatomical pathways of the top-down auditory identity network, the functional connectivity pattern of IFJa was evaluated using single-seed partial correlation. The approach filters out shared variance, showing direct functional coupling of the ventral what-stream to the IFJa.

4.3.1 Temporal-Semantic connections

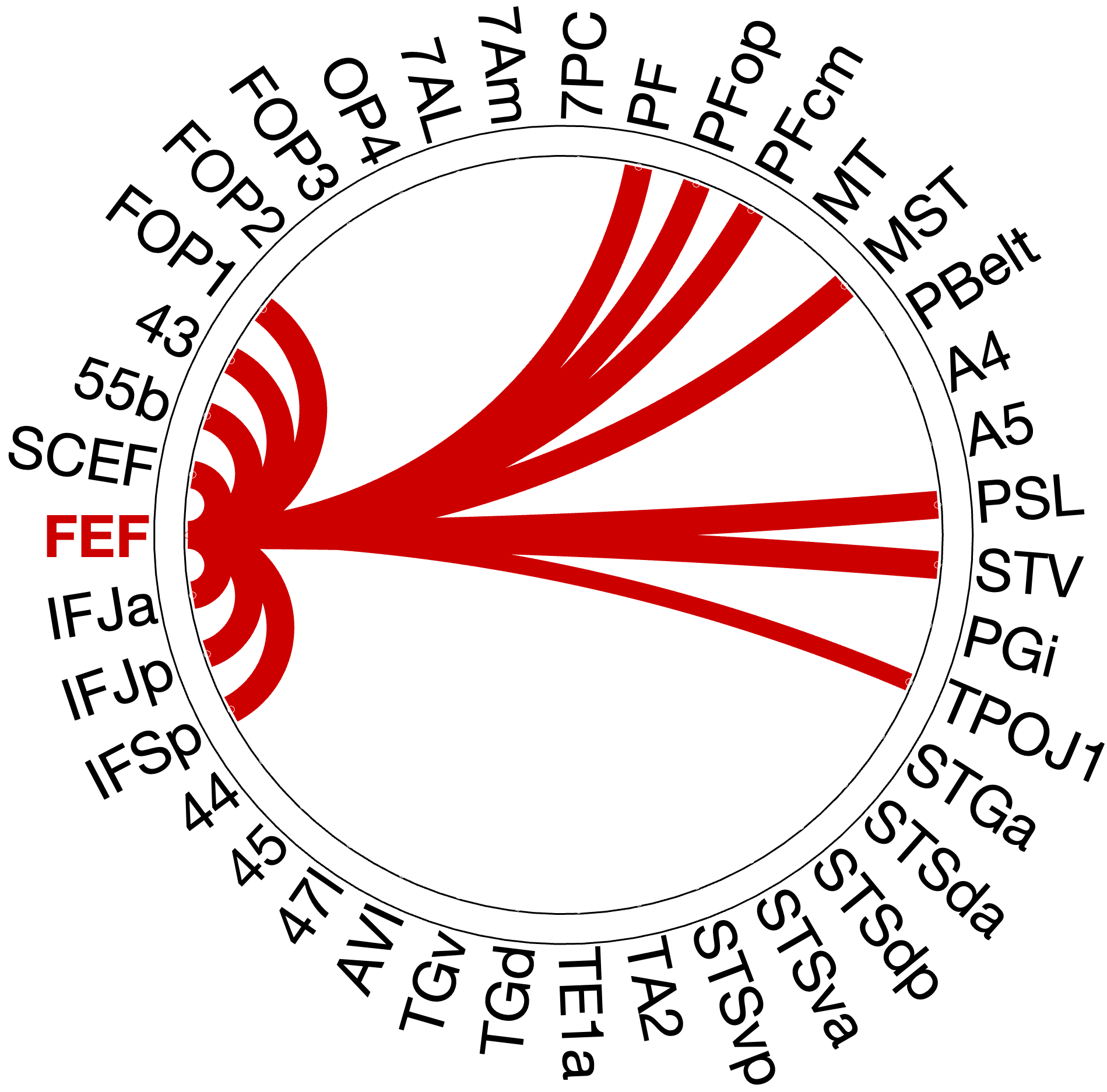

The partial correlation analysis confirms that the IFJa couples robustly with areas in the superior temporal sulcus (e.g. STSdp: left , ; right , and STSda: left , ; right , ). Additionally, the left hemisphere integrates STGa and STSva. This direct prefrontal-temporal pathway supports the IFJa as a top-down controller for decoding auditory object identity.

Figure 4.X: IFJa single-seed partial correlation connectivity profile, left (A) and right (B) hemisphere. Significant connections are concentrated in superior temporal sulcus regions (STSda, STSdp), Broca’s complex (Area 44, Area 45), and early auditory association areas (A4, A5). This temporal-semantic distribution confirms IFJa as the prefrontal top-down controller for the auditory ‘what’-stream.

4.3.2 Prefrontal Language Connections

Within the prefrontal cortex, the IFJa serves as a master hub for the semantic language network. IFJa bilaterally couples with IFG subregions 44 (left , ; right , ), 45 (left , ; right , ), and 47l. Additionally, IFJa couples strongly with the dorsal language hub 55b (left , ; right , ) and FEF (left , ; right , ). This positions IFJa as a main connector and attention hub for the language network.

4.3.3 Gateways and Convergence

Partial correlation reveals bilateral coupling with auditory areas A4 (left ; right ) and A5 (left ; right ), along with right-lateralized PBelt connections (). Additionally, multimodal convergence areas such as STV (left ; right ) and TPOJ1 are deeply integrated into the IFJa network, combining auditory identity with other sensory modalities. PSL also couples with IFJa in both hemispheres (, right: , left: 9.65).

4.3.4 The Spatial Dissociation

A fundamental topographical dissociation emerges when contrasting IFJa’s full and partial correlation patterns, especially in the parietal lobe. Full correlation shows strong coupling across motor and spatial areas (55b, OP4, MT/MST), whereas partial correlation reveals sparse, language-driven coupling instead. This supports the IFJa’s role as a prefrontal hub for semantic, temporal pathways, segregating it from the spatial “where”-stream.

4.4 Resolving Ambiguities

During the analysis and literature review, areas A4, A5, PBelt, PSL and STV showed ambiguous connectivity patterns. This section addresses the ambiguities found in the literature and in the results of the RSFC analysis.

A4 and A5. We provisionally placed area A4 in the ‘where’-stream and A5 in the ‘what’-stream (Section 3.2.5), given the conflicting evidence in the literature (Rolls et al. (2023) - Cerebral Cortex, Glasser et al. (2016) - Nature). Partial correlation analysis resolves this conflict empirically by showing robust and bilateral connectivity of both areas A4 and A5 with IFJa (A4: left , ; right , ; A5: left , ; right , ), while showing no meaningful coupling with FEF. The only exception is a weak, left-lateralized A4-FEF connection (, ) in the FEF single-seed analysis. This pattern positions both A4 and A5 as ‘what’-stream gateways under potential IFJa top-down control.

PBelt. PBelt was grouped alongside A4 as a potential dorsal gateway in the pre-analysis classification (Section 3.2.5). In the single-seed IFJa analysis, PBelt shows only weak coupling (right: , ). Partial correlation reduces this further to near-equivalent values for both prefrontal seeds (IFJa: right , ; FEF: left , ). The absence of a dominant prefrontal partner, combined with near-zero mean connectivity, indicates that PBelt’s functional coupling is insufficient for reliable stream assignment.

PSL. Rolls et al. (2023) - Cerebral Cortex classify PSL within the ventral semantic network, while Dureux (2024) reports complete acoustic unresponsiveness across all stimulus categories. Our analysis reveals a strongly asymmetric connectivity profile. The IFJa single-seed analysis shows bilateral PSL-IFJa coupling (left , right , ). The FEF single-seed analysis reveals a right-lateralized PSL-FEF connection (, ), entirely absent in the left hemisphere. No other ROI in this analysis displays this pattern of bilateral ‘what’-stream coupling alongside exclusive right-lateralized ‘where’-stream coupling.

STV. STV was classified as part of the ventral language network by Rolls et al. (2023) - Cerebral Cortex and couples directly with both prefrontal seeds in separate single-seed analyses. In full correlation, STV couples more strongly with FEF in both hemispheres, whereas in partial correlation it couples more strongly with IFJa in both. In the IFJa analysis, STV couples bilaterally with IFJa (left , ; right , ), after partialling out FEF. In the FEF analysis, STV couples bilaterally with FEF (left , ; right , ), after partialling out IFJa. In both cases, the coupling is direct, not mediated through indirect connections.

4.5 Functional Roles of Adjacent Areas

4.5.3 Specificity Validation: FEF vs. 55b

A parallel validation compared FEF with its immediate premotor neighbour, area 55b, using partial correlation to rule out nonspecific regional signals. Despite their direct anatomical neighbourhood, the two seed regions exhibit clearly dissociable connectivity patterns. In both hemispheres, the FEF maintains dominant coupling with parietal and motor areas (PF: left , ; right , ; PFcm: left , ; MST: left , ). In contrast, area 55b dominates language-related connectivity, with the highest mean connectivity values in the entire analysis: PSL (left , ; right , ), STSdp (left , ; right , ), and Broca’s areas 44 (left , ; right , ) and 45 (left , ; right , ). The FEF profile shows no equivalent coupling with temporal or language regions in either hemisphere.

Figure 4.X: Functional connectivity heatmap comparing FEF and area 55b partial correlation z-scores across all auditory target ROIs. Despite anatomical adjacency, FEF (upper rows) couples preferentially with parietal and motor regions, while 55b (lower rows) couples predominantly with temporal, opercular, and language areas. This dissociation confirms that the spatial connectivity profile is specific to the FEF and does not reflect a general dorso-prefrontal signal.

Figure 4.X: FEF versus 55b partial correlation circular diagrams, left (A) and right (B) hemisphere. Area 55b (green) exhibits dominant connectivity to superior temporal regions, Broca’s complex (Area 44, Area 45), and early auditory areas (A4, A5), while FEF (red) emphasises inferior parietal and premotor areas. The opposing profiles confirm that 55b functions as a language relay rather than a spatial control hub.

Figure 4.X: FEF versus 55b partial correlation projected onto the cortical surface, left (A) and right (B) hemisphere. Red shading indicates preferential FEF coupling; green shading indicates preferential 55b coupling. The spatial contrast between parietal (FEF-dominant) and temporal (55b-dominant) cortex visually confirms the functional dissociation between the spatial control hub and the language relay.

Interestingly, 55b shows no significant coupling to spatial areas in the parietal lobe, but maintains strong direct coupling with auditory association areas A5 (left: , right ) and A4 (right: ). The latter connections are mostly absent in the FEF profile (only in the left hemisphere to A4, , ).

4.5.4 Results of the Broca-Seed Validation

Next, we evaluate the proposed functional subdivision of Broca’s area within the auditory dual-stream architecture (Rolls et al. (2023) - Cerebral Cortex). The connectivity patterns of Areas 44 and 45 were independently assessed using partial correlation to isolate direct functional pathways.
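To make the logic of partial correlation concrete, the following minimal sketch shows how a strong full correlation between two signals can vanish once a shared third signal is controlled for. It is a toy illustration under stated assumptions, not the analysis pipeline used here: the time series are simulated, the names `seed`, `target` and `shared` are hypothetical, and only a single covariate is removed, whereas a multi-ROI analysis would condition on all remaining regions at once.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def partial_corr(x, y, z):
    """Correlation of x and y after removing the linear contribution of z."""
    r_xy, r_xz, r_yz = pearson(x, y), pearson(x, z), pearson(y, z)
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))


def fisher_z(r):
    """Fisher r-to-z transform, often applied before averaging across subjects."""
    return math.atanh(r)


# Simulated (hypothetical) time series: one shared signal drives both regions.
rng = random.Random(0)
shared = [rng.gauss(0, 1) for _ in range(2000)]
seed = [s + 0.5 * rng.gauss(0, 1) for s in shared]
target = [s + 0.5 * rng.gauss(0, 1) for s in shared]

full_r = pearson(seed, target)
partial_r = partial_corr(seed, target, shared)
print(f"full r = {full_r:.2f}, partial r = {partial_r:.2f}")
```

In this toy case the full correlation is high because both signals inherit the shared component, while the partial correlation is close to zero. This is the sense in which partial correlation isolates direct coupling from nonspecific regional signals.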

The comparative analysis reveals that Area 45 couples more strongly with semantic temporal areas than Area 44; in the right hemisphere, this extends to a stronger coupling with 55b. Area 44, by contrast, shows stronger coupling with 55b in the left hemisphere.

Area 45: The Ventral Anchor

Area 45 exhibits broad integration into the ventral what-stream, with its connectivity profile extending extensively into the temporal lobe (e.g., STSdp: left , ; right , ) and demonstrating strong coupling with PSL (left , ; right , ). Although the visualisation may suggest coupling with superior parietal (7AL, 7Am, 7PC) and premotor areas (FOP3), the corresponding mean connectivity values are near-zero or negative (), indicating that these statistically detected associations do not reflect meaningful functional coupling strength. This robust temporal-prefrontal coupling firmly anchors Area 45 as a core semantic node within the Broca complex. In contrast to Area 44, Area 45 alone shows direct coupling with early auditory areas A4 and A5 (e.g., A5: left , ), indicating that the gateway between early acoustic processing and the Broca complex runs specifically through Area 45.

Area 44: The Motor-Articulatory Interface

Area 44 reveals a fundamentally different architecture. Rather than coupling with the temporal-semantic network, its significant connectivity is concentrated in the anterior ventral insula (AVI: left , ; right , ), the premotor language node 55b (left , ), and IFJa (left , ; right , ). Additionally, Area 44 shows direct coupling with FEF (right: , ), providing a direct link to the dorsal spatial control hub. Regions such as MT, MST, and PFop show near-zero or negative partial correlation values (), confirming the absence of integration with parietal and motion-sensitive areas. This suggests that Area 44 helps connect semantic information from the ‘what’-stream to articulatory output via 55b.

4.6 Behavioural Prediction

To validate the functional relevance of the resting-state networks identified in sections 4.2–4.5, we assessed whether individual differences in RSFC could predict performance on two in-scanner fMRI tasks and one out-of-scanner behavioural measure from the HCP battery.

All analyses report ROIs of the right hemisphere only, because the left hemisphere did not yield significant results under the same task-informed analysis. The absence of significant left-hemisphere results is discussed in Section 5.5.
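The general idea of leave-one-out cross-validated prediction from connectivity edges can be sketched as follows. This is a simplified illustration under stated assumptions rather than the procedure used in this thesis: the data are simulated, the function name `loo_predict`, the selection threshold `r_thresh` and the signed-sum summary score are hypothetical choices, and edge selection here uses a plain correlation cut-off instead of a significance test.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def fit_line(x, y):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sxx
    return slope, my - slope * mx


def loo_predict(edges, behaviour, r_thresh=0.4):
    """Leave-one-out prediction; returns cross-validated R and per-edge selection counts."""
    n_subj, n_edges = len(edges), len(edges[0])
    predicted, times_selected = [], [0] * n_edges
    for held_out in range(n_subj):
        train = [s for s in range(n_subj) if s != held_out]
        y = [behaviour[s] for s in train]
        # Select edges on training subjects only, so the held-out subject stays unseen.
        signs = {}
        for e in range(n_edges):
            r = pearson([edges[s][e] for s in train], y)
            if abs(r) > r_thresh:
                signs[e] = 1 if r > 0 else -1
                times_selected[e] += 1
        if not signs:  # no edge passed the threshold: fall back to the training mean
            predicted.append(sum(y) / len(y))
            continue

        def summary(s):
            # Signed sum of selected edges: positive minus negative predictors.
            return sum(sign * edges[s][e] for e, sign in signs.items())

        slope, intercept = fit_line([summary(s) for s in train], y)
        predicted.append(slope * summary(held_out) + intercept)
    return pearson(predicted, behaviour), times_selected


# Simulated (hypothetical) data: behaviour depends on the first two edges only.
rng = random.Random(0)
n_subj, n_edges = 60, 12
edges = [[rng.gauss(0, 1) for _ in range(n_edges)] for _ in range(n_subj)]
behaviour = [row[0] + row[1] + 0.5 * rng.gauss(0, 1) for row in edges]

cv_r, times_selected = loo_predict(edges, behaviour)
print(f"cross-validated R = {cv_r:.2f}")
print("folds in which each edge was selected:", times_selected)
```

Because the model is refitted in every fold and evaluated only on the held-out subject, the cross-validated R can be negative when a model generalises poorly. Counting how often an edge is selected across folds gives a simple measure of how consistently it contributes to the prediction.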

4.6.1 “What” Stream: Predicting Semantic Processing Speed

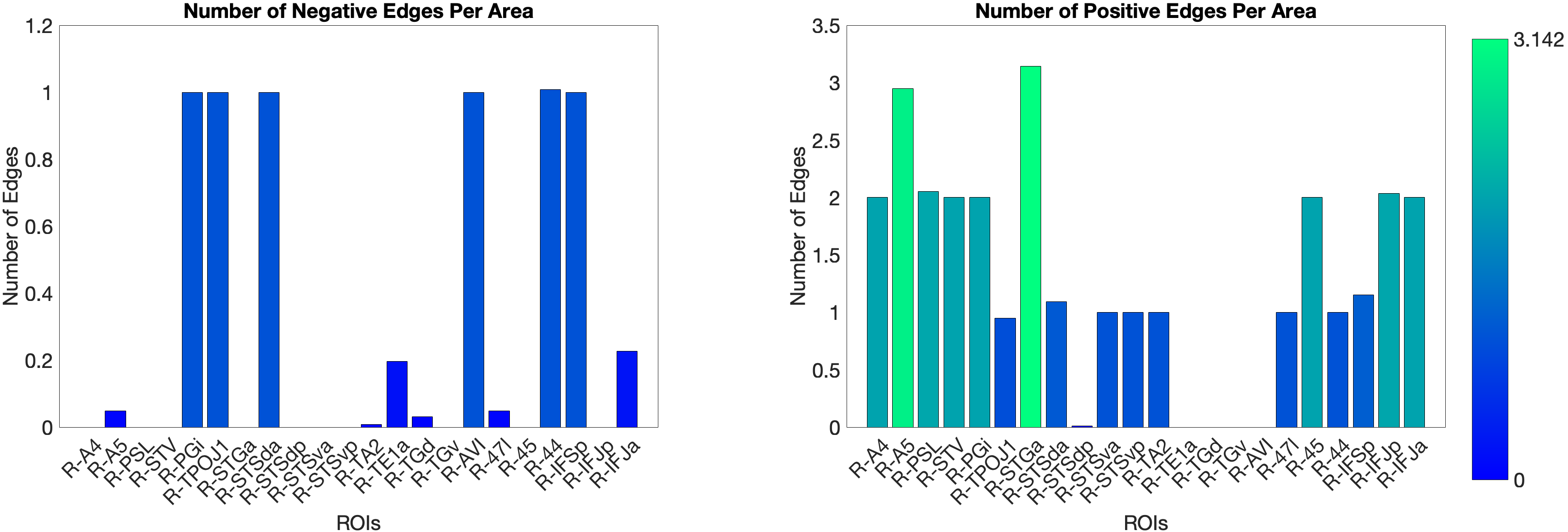

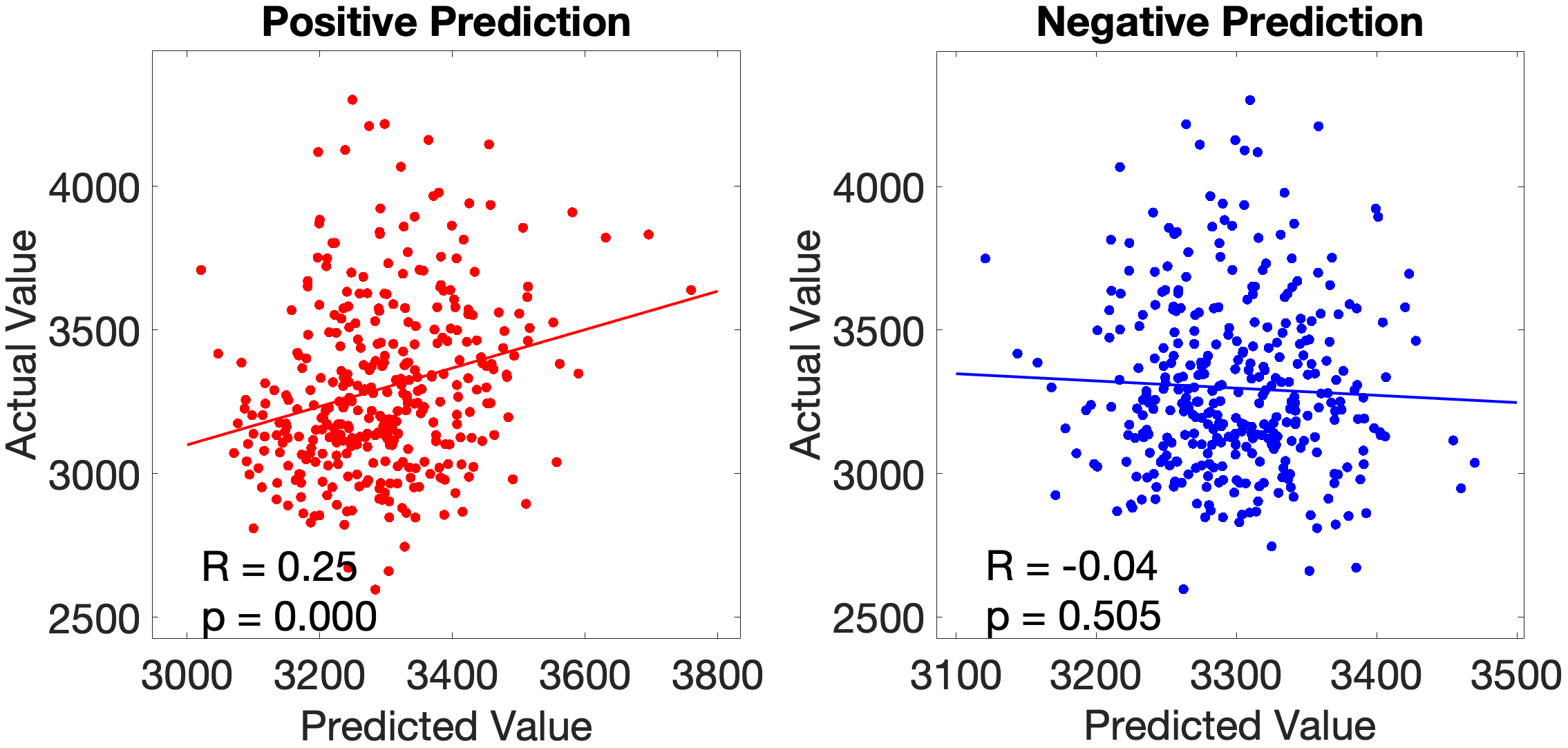

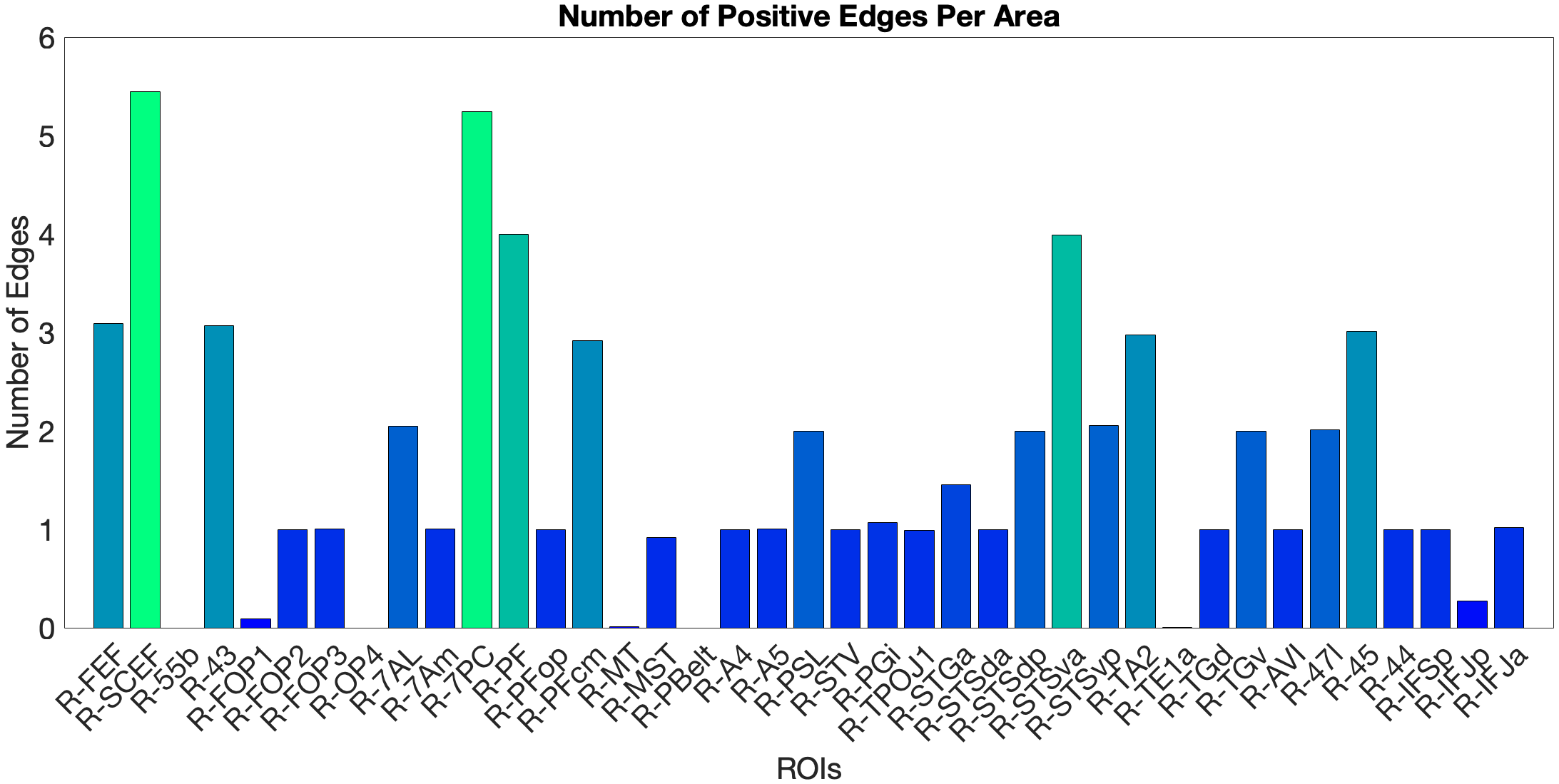

The main test of the ventral ‘what’ stream hypothesis used the Language Task Story median reaction time (Median RT). The ventral ROI model significantly predicted story Median RT (, ), with areas A5 and STGa as the strongest positive predictors, meaning that stronger resting-state coupling to areas A5 and STGa was associated with faster response times. The dorsal ROI model did not reach significance (, n.s.). This provides a clean dissociation: the semantic ‘what’ network predicts language comprehension speed, while the spatial ‘where’ network does not (Figure 4.X).

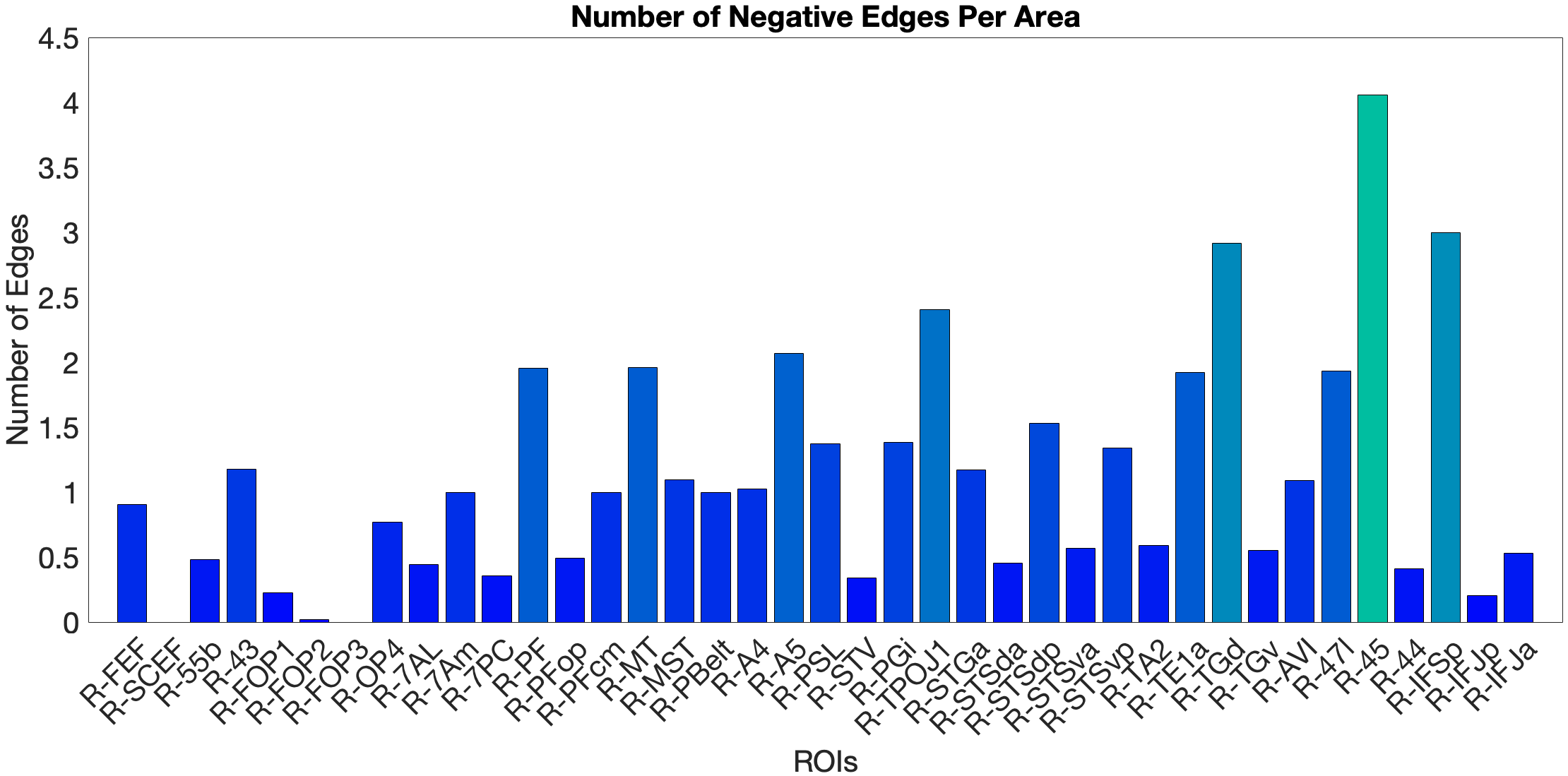

Figure 4.X: Number of predictive edges per area for the ventral ROI model predicting Language Story Median Reaction Time (right hemisphere; partial correlation, K = 371 leave-one-out cross-validation). Each bar represents the number of resting-state functional connectivity edges involving that area that contributed to the cross-validated prediction. Areas A5 and STGa emerge as the strongest positive predictors, meaning that stronger resting-state coupling of these areas to the ventral network is associated with faster semantic processing speed. The dorsal ROI model did not reach significance (R = 0.04, n.s.), confirming that this behavioural prediction is specific to the ‘what’-stream network.

Figure 4.X: Cross-validated p-values for the Language Story Median RT prediction, ventral ROI model (right hemisphere; partial correlation, K = 371). Bars indicate significance of the cross-validated correlation per area, confirming that the ventral stream prediction is robust across leave-one-out cross-validation folds.

Story accuracy (Acc), by contrast, shows a different pattern. The full network predicted story Acc (, ), and both the ventral (, ) and dorsal (, ) submodels reached significance. Interestingly, Area 45 emerged as a negative predictor of accuracy, meaning that stronger Area 45 coupling was associated with lower accuracy. The ‘where’-stream model’s prediction of accuracy was driven by areas 43 and PF (Figure 4.Y).

Figure 4.Y: Number of predictive edges per area for the all-ROI model predicting Language Story Accuracy (right hemisphere; partial correlation, K = 371 leave-one-out cross-validation). Area 45 emerges as a negative predictor, meaning stronger resting-state coupling to Area 45 is associated with lower story comprehension accuracy. This is consistent with an interference effect in which engagement of deep syntactic processing disrupts comprehension of semantically simple narratives. Area STSva contributes as the main positive predictor. Both the dorsal (R = 0.24, p < .001) and full-network (R = 0.38, p < .001) models also reached significance, indicating that story accuracy, unlike Median RT, reflects distributed network contributions rather than ventral-stream specificity alone.

4.6.2 Acoustic Signal Filtering (Noise Comparison)

As a control task for low-level acoustic signal filtering, we chose the NIH Toolbox Noise Comparison. The ‘what’-pathway fails to predict this task (n.s.). In the full-network model (, ) and the ‘where’-stream model (, ), we observed significant predictions. In the full-network model, area PFcm was the dominant positive predictor, with FOP3 as the main negative predictor. The ‘where’-stream submodel showed a different pattern: OP4 was the main positive predictor, while FOP3, 7AL and PBelt were the negative ones. Both models show leading predictors in opercular and parietal rather than prefrontal areas. This means RSFC in the semantic network does not generalise to acoustic noise exclusion.

4.6.3 Predicting Visuo-Spatial Working Memory

We tested the dorsal ‘where’-stream hypothesis against Working Memory Task Place accuracy (Place Acc) and reaction time (Place RT). In the full-network model (, ), SCEF and AVI were the strongest positive predictors. Notably, FEF did not emerge as a significant predictor — a null result given its hypothesised role as the primary dorsal prefrontal hub (Salmi (2009), see section 2.2.3). The dorsal submodel yielded a negative cross-validated R (, ), driven by areas 7AL and 7Am. The ventral submodel predicted Place Acc positively (, ), driven by area 47l.

For Place RT, the dorsal model produced an artefactual result () due to matrix rank collapse and is excluded from interpretation (see section 5.7). The ventral model yielded a marginally significant prediction (, ), with STSda as positive and IFJa as negative predictor. The full-network model (, ) identified MT and TA2 as negative and PSL as positive predictor.
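As a general illustration of what a rank-deficient predictor matrix looks like, the following sketch computes matrix rank by Gaussian elimination and compares a matrix with a redundant column against one with independent columns. It is a generic example under stated assumptions: the function `matrix_rank`, the tolerance `tol` and both toy matrices are hypothetical, and the sketch does not reproduce how the collapse arose or was detected in the present analysis.

```python
def matrix_rank(rows, tol=1e-9):
    """Rank of a matrix (list of rows) via Gaussian elimination with partial pivoting."""
    m = [[float(v) for v in row] for row in rows]
    n_rows, n_cols = len(m), len(m[0])
    rank = 0
    for col in range(n_cols):
        if rank == n_rows:
            break
        # Pick the row with the largest absolute entry in this column as pivot.
        pivot = max(range(rank, n_rows), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < tol:
            continue  # column is (numerically) dependent on earlier columns
        m[rank], m[pivot] = m[pivot], m[rank]
        for r in range(rank + 1, n_rows):
            factor = m[r][col] / m[rank][col]
            for c in range(col, n_cols):
                m[r][c] -= factor * m[rank][c]
        rank += 1
    return rank


# Hypothetical predictor matrices: intercept plus two predictors per subject.
collinear = [[1, 1, 2], [1, 2, 4], [1, 3, 6], [1, 4, 8]]      # third column = 2 x second
independent = [[1, 1, 1], [1, 2, 4], [1, 3, 9], [1, 4, 16]]  # no redundant column

print("rank with redundant column:", matrix_rank(collinear))
print("rank with independent columns:", matrix_rank(independent))
```

When the rank is lower than the number of columns, a regression on that matrix has no unique solution, so any fitted coefficients and the resulting predictions should not be interpreted.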

An overview of cross-validated prediction results across all tasks and models is provided in Table 4.X. A complete listing of all target regions of interest with their final stream assignments — including areas reclassified on the basis of partial correlation results (Sections 4.4.1–4.4.3) — is provided in Appendix Table A2.

Table 4.X: Cross-validated prediction of behavioural performance from resting-state functional connectivity (right hemisphere; partial correlation, K = 371). †Dorsal WM Place RT model excluded due to matrix rank collapse.

| Task | Model | R | p | Key predictors |
|---|---|---|---|---|
| Language Story Median RT | Ventral | 0.18 | .001 | A5, STGa (+) |
| Language Story Median RT | Dorsal | 0.04 | n.s. | — |
| Language Story Median RT | Full | 0.07 | n.s. | — |
| Language Story Acc | Ventral | 0.32 | <.001 | STSva (+), 45 (−) |
| Language Story Acc | Dorsal | 0.24 | <.001 | 43, PF (+) |
| Language Story Acc | Full | 0.38 | <.001 | SCEF, 7PC (+), 45 (−) |
| Noise Comparison | Ventral | −0.07 | n.s. | — |
| Noise Comparison | Dorsal | 0.17 | .001 | OP4 (+), FOP3, 7AL, PBelt (−) |
| Noise Comparison | Full | 0.22 | <.001 | PFcm (+), FOP3 (−) |
| WM Place Acc | Ventral | 0.20 | <.001 | 47l (+) |
| WM Place Acc | Dorsal | −0.15 | .004 | 7AL, 7Am (+) |
| WM Place Acc | Full | 0.14 | .007 | SCEF, AVI (+) |
| WM Place RT | Ventral | −0.11 | .035 | STSda (+), IFJa (−) |
| WM Place RT | Dorsal† | — | — | — |
| WM Place RT | Full | −0.20 | <.001 | PSL (+), MT, TA2 (−) |

5.0 Discussion

5.1 Resting-State Support for a Supramodal Prefrontal Architecture

Our central hypothesis is supported by our RSFC results (Sections 4.1–4.3): FEF couples preferentially with auditory-spatial and motion-sensitive areas, i.e. the ‘where’-stream, whereas IFJa couples selectively with the temporal-semantic and language-related areas of the ‘what’-stream. Applying single-seed and comparative partial correlation analyses, we observe a dissociation analogous to the spatial vs. non-spatial segregation previously established by Bedini & Baldauf (2021), suggesting FEF and IFJa as prefrontal control hubs not only for the visual but also for the auditory domain.

5.1.1 The FEF as an Auditory-Spatial Controller

The partial correlation results are consistent with the FEF’s established role as a spatial attention controller and extend this role into the auditory domain. Rather than coupling with SPL regions typically associated with visual attention (7PC, 7Am, 7AL), the FEF couples selectively with the IPL, specifically PF and PFcm, and with the motion-sensitive area MST. Through this coupling, the FEF forms a clear auditory-spatial pathway with the IPL and MST, while the SPL connections vanish in partial correlation, unlike in the visual analyses with FEF (Bedini & Baldauf (2021)). The FEF coupling with STV and TPOJ1 suggests that these regions function as multimodal convergence hubs (Rolls (2022) - NeuroImage). Alongside this ‘where’-coupling, the FEF also exhibits decoupling from all ventral temporal areas (e.g. TE1a, TA2, TGd).

This pattern aligns with the functional logic described by Rauschecker & Scott (2009) - Nature Neuroscience, who reviewed evidence that the posterodorsal auditory stream links posterior superior temporal cortex and parietal areas for spatial processing, with activation in regions adjacent to MT/MST specifically for auditory motion. Task-based fMRI supports this: Salmi (2009) demonstrated that top-down controlled shifts of auditory spatial attention recruit FEF/PMC alongside SPL and IPS, serving as evidence for FEF’s functional relevance in auditory spatial orienting. Our resting-state data extend this to the prefrontal level, suggesting that the FEF performs control over auditory spatial orienting, which operates within a multisensory framework (Rauschecker & Scott (2009) - Nature Neuroscience).

5.1.2 The IFJa as a Semantic-Auditory Controller

As shown in Section 4.3, the partial correlation pattern of the IFJa is complementary to that of the FEF: it couples selectively with STS regions (STSdp, STSda), Broca’s areas 44 and 45, and early auditory association areas A4 and A5, but shows no substantial coupling with parietal spatial areas. In direct contrast to the FEF, this pattern is focused on language and object identity and is decoupled from parietal auditory-spatial regions.

This selectivity is consistent with the IFJa’s network and functional characterisation. Based on the resting-state parcellation of Ji et al. (2019, as reviewed in Bedini & Baldauf (2021)), the IFJa is assigned to the language network, placing it within the non-spatial semantic-language domain. The results suggest that IFJa may exert top-down attentional control over auditory feature extraction, object identity and semantic processing. The coupling with Broca’s areas could also imply a role in the auditory object-related working memory system (Bedini & Baldauf (2021)). This is further consistent with the finding that top-down prefrontal control over auditory object processing is implemented via anticipatory alpha oscillations (De Vries & Baldauf (2021) - Journal of Neuroscience).

5.1.3 The How-Stream: FEF, 55b, and Auditory-Motor Integration

As shown in Section 4.2.2, the FEF’s strongest partial correlations are not with auditory regions at all, but with premotor-opercular regions 55b, SCEF, FOP1, and 43. This premotor pattern raises the question of whether the FEF performs auditory spatial control directly or is part of a broader network for auditory-motor integration. Hickok & Poeppel (2007) - Nature Reviews Neuroscience argued that the dorsal stream primarily serves auditory-motor integration, translating acoustic speech signals into articulatory motor plans. Our data are consistent with this view at the prefrontal level (Sections 4.2.1, 4.2.4): the FEF maintains spatial coupling with the IPL and MST, while its premotor connections via 55b and FOP1 suggest how-stream functions.

Seed-specificity analysis (Section 4.5) further supports this dissociation. When 55b is used as seed in comparison with FEF, it couples directly with early auditory association areas A4 and A5, connections absent from the FEF’s partial profile. This divergence suggests that 55b functions as the auditory-motor relay for early acoustic features (Dureux (2024)), while the FEF operates on a more abstract spatial coordinate system. Therefore, the FEF may serve as the pure spatial controller, supplying abstract coordinates to the 55b-anchored how-stream without engaging in low-level acoustic processing itself.

→ TODO: re-examine the single-seed 55b analysis here.

5.1.4 A Supramodal Prefrontal Architecture